Mobile farm monetization is the process of using managed mobile device infrastructure to support revenue-linked workflows such as app testing, campaign QA, account operations, content review, or mobile task delivery. Treat it as an operating model, not a promise of passive income. The model only works when the workflow, cost, policy, and review process are clear.

The direct answer is simple. A mobile farm can support monetization when it creates repeatable mobile capacity for a legitimate business task. The value comes from throughput, handoff, device control, and workflow visibility. The risk comes from vague goals, weak cost tracking, platform-rule issues, and poor device-state management.

For teams, the better question is not “How many phones can we run?” The better question is “Which mobile workflow creates enough value to justify a controlled device pool?” A phone farm or cloud phone setup is useful only when the task is real, repeatable, measurable, and reviewable.

Key Takeaways

- Mobile farm monetization starts with a real workflow, not with device count.

- Revenue must be compared against device cost, labor, routing, tooling, review, and recovery work.

- Good fits include QA, campaign testing, mobile operations, review tasks, and controlled account workflows.

- Weak fits include unclear offers, platform-rule avoidance, unmanaged automation, and tasks with no measurable output.

What Is Mobile Farm Monetization?

The first step is to separate the phrase from the hype. A device pool does not automatically produce revenue. Revenue comes from the work operators run on that infrastructure, and only when that work has a business outcome.

That work may be direct or indirect. Direct work might include paid mobile testing, app workflow review, or managed account operations for clients. Indirect work might include campaign QA, mobile landing-page checks, social workflow support, or support reproduction that protects revenue elsewhere.

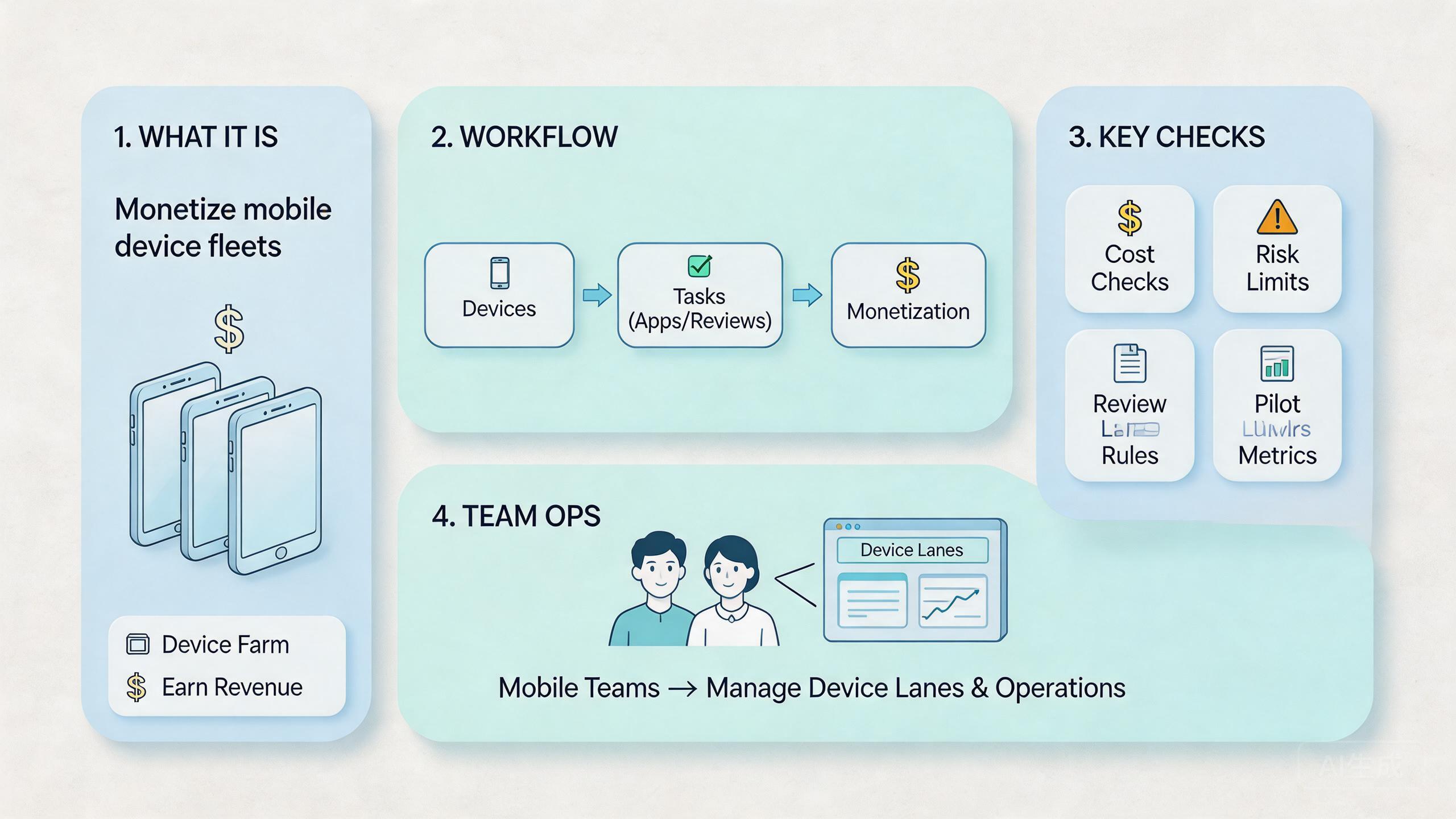

A practical mobile farm monetization guide should start with four parts:

- Workflow: what repeated mobile task will the team run?

- Capacity: how many device lanes are needed for that task?

- Economics: what cost and labor sit behind each run?

- Controls: how will the team track results, recover failures, and stay within rules?

The workflow is the anchor. A mobile farm without a defined workflow becomes a pile of screens. A small pool with a clear job can be more useful than a large pool with vague intent.

The capacity plan comes next. Device count should follow workload shape. A team running campaign QA may need fewer devices than a team running parallel mobile testing. A team handling client operations may need stricter separation than a team doing internal review.

Economics should be plain. Count device rental or ownership cost, staff time, network cost, software tools, reset work, failed runs, and management overhead. A revenue figure is not meaningful until those costs are visible.

Controls protect the model. Google Search Central's helpful content guidance reminds site owners to create content for people, not to manipulate search rankings (Google Search Central). The same operating principle applies here. A mobile farm should support real work, not try to exploit platform gaps.

Why Mobile Farm Monetization Matters

Mobile workflows can become operational bottlenecks. One person with one local phone may handle a small task. A business team often needs several people to run, review, and repeat mobile work without losing state.

Monetization matters when better mobile execution affects revenue. A SaaS team may need repeat mobile QA before launch. An agency may need cleaner client workflow separation. A support team may need to reproduce mobile issues faster. A marketing team may need to check campaigns from mobile sessions before spend increases.

Each case has a different value path. QA may reduce release risk. Campaign checks may reduce wasted spend. Client operations may create billable service capacity. Support reproduction may reduce churn pressure. The device pool is not the business model. It is the infrastructure behind the business model.

This distinction prevents bad decisions. Buying more devices can look like growth, but unmanaged capacity often creates hidden costs. Operators need training. Devices need state rules. Routes need records. Failed runs need recovery. Managers need time to review.

| Monetization path | Value driver | Main control needed |
|---|---|---|
| Mobile QA | Faster test coverage | Clean device state and repeatable cases |
| Campaign review | Better pre-launch checks | Approval logs and route notes |
| Client operations | Billable execution capacity | Client lane separation |
| Support reproduction | Faster issue review | Device history and reset rules |

The best teams treat monetization as a measurement problem. Each lane has a cost. Each run has an output. Some failures are normal, while others mean the workflow should pause.

Google's SEO Starter Guide advises making pages clear and useful for users (SEO Starter Guide). That principle is useful beyond SEO. A mobile farm operation should also be clear enough that another person can understand the lane, the task, the result, and the next action.

The review loop should also separate gross value from usable value. A workflow may appear profitable before labor and failed runs are counted. A more honest view subtracts retry time, device reset time, routing checks, review work, and manager oversight.

This is where many early models break. Managers count completed tasks but not cleanup. They count revenue but not support time. They count devices but not idle capacity. Those gaps can make a weak workflow look stronger than it is.

Key Benefits and Use Cases

The biggest myth is that monetization comes from scale alone. Scale helps only after the workflow is proven. Before that, extra devices can multiply unclear work.

A better model is workflow-first. Choose one task where mobile capacity clearly changes the result. Then build device lanes, role rules, and measurement around that task.

Common use cases include:

- App and workflow testing: teams can test mobile steps across repeated Android sessions.

- Marketing campaign QA: teams can check landing pages, forms, links, and mobile display before launch.

- Managed client operations: agencies can separate client work into lanes with cleaner ownership.

- Support and bug reproduction: teams can recreate mobile issues in a controlled environment.

- Social workflow review: teams can inspect mobile-first content or account workflows with shared access.

Each use case needs a different control level. Testing may need clean reset rules. Client work may need strict lane separation. Marketing QA may need approval records. Support reproduction may need screenshots, device history, and clear issue notes.

MoiMobi should be understood as execution infrastructure for these workflows. A cloud phone gives remote mobile access. Device isolation helps separate workspaces. Mobile automation can handle selected repeat steps once the process is stable.

Strong fit usually appears when three conditions are true. The task repeats often. The output can be reviewed. The cost per run can be tracked. Without those conditions, the team may not know whether the device pool is making money or hiding waste.

Weak fit appears when the plan depends on vague traffic, unclear platform behavior, or unsupported income claims. A mobile farm should not be used to avoid rules, inflate metrics, or run work that the team cannot explain to a client, partner, or platform.

How to Get Started with Mobile Farm Monetization

Begin with a narrow pilot. The first pilot should prove the model, not the maximum capacity. A small test with clear metrics is safer than a large rollout with unclear costs.

Use this sequence:

- Choose one monetizable workflow. Pick a task with a clear business link, such as mobile QA, campaign checking, or client account operations.

- Define the output. Decide what a successful run produces. It may be a report, a screenshot set, a checked workflow, or an approved task.

- Assign device lanes. Keep each lane tied to one workflow, client, region, or campaign group.

- Set role boundaries. Operators run the task. Reviewers inspect results. Admins control reset, routing, and lane changes.

- Track real cost. Include device cost, labor, tools, route cost, failed runs, and review time.

- Create a recovery rule. Decide when a device counts as ready, under review, in need of a reset, or quarantined.

- Review before scale. Expand only when the pilot is repeatable and the numbers still make sense.
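The role boundaries in the sequence above can be written down as an explicit permission map. The following is a minimal sketch, not a feature of any particular platform. The action names (`run_task`, `inspect_result`, `reset_device`, `change_routing`, `change_lane`) are hypothetical labels chosen for illustration.

```python
from enum import Enum


class Role(Enum):
    OPERATOR = "operator"
    REVIEWER = "reviewer"
    ADMIN = "admin"


# Hypothetical action names; adapt them to the team's own workflow.
PERMISSIONS = {
    Role.OPERATOR: {"run_task"},
    Role.REVIEWER: {"inspect_result"},
    Role.ADMIN: {"reset_device", "change_routing", "change_lane"},
}


def is_allowed(role: Role, action: str) -> bool:
    """Return True only if the role is explicitly granted the action."""
    return action in PERMISSIONS.get(role, set())


# Example: an operator may run a task but may not reset a device.
operator_can_run = is_allowed(Role.OPERATOR, "run_task")
operator_can_reset = is_allowed(Role.OPERATOR, "reset_device")
```

Denying anything that is not listed keeps the default safe: a new action has to be assigned to a role on purpose before anyone can use it.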

The highest-risk step is cost tracking. Teams often count only revenue and device cost. That misses labor, retries, review time, and management overhead. Those costs can decide whether the workflow is useful.

A simple pilot sheet can work at the start. Track lane name, device ID, operator, route policy, task result, time spent, cost estimate, and recovery state. The record does not need to be complex. It needs to be consistent.
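One way to keep the sheet consistent is to define the row once and export every run through the same structure. The sketch below assumes the eight fields named above; the type choices (minutes as a float, cost as a float in whatever currency the team uses) and the names `PilotRun` and `to_csv` are illustrative assumptions.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class PilotRun:
    lane_name: str
    device_id: str
    operator: str
    route_policy: str
    task_result: str
    minutes_spent: float   # assumed unit: minutes
    cost_estimate: float   # assumed unit: the team's reporting currency
    recovery_state: str


def to_csv(runs: list[PilotRun]) -> str:
    """Serialize runs to CSV text with a fixed column order."""
    buffer = io.StringIO()
    columns = [f.name for f in fields(PilotRun)]
    writer = csv.DictWriter(buffer, fieldnames=columns, lineterminator="\n")
    writer.writeheader()
    for run in runs:
        writer.writerow(asdict(run))
    return buffer.getvalue()


# Example row with made-up values.
sheet = to_csv([
    PilotRun("qa-lane-1", "dev-001", "alice", "default", "pass", 12.5, 3.2, "ready"),
])
```

Because the columns come from the dataclass, a missing field fails when the record is created rather than showing up later as a blank cell.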

Connect the pilot to multi-account management only when the workflow needs separate account lanes. Do not mix unrelated accounts or clients in one pool just to save time. Messy sharing often creates later review work.

When automation is involved, start with low-risk steps. State capture, status checks, file uploads, and report formatting are easier to review than broad account actions. Wider automation should wait until the team can explain failures quickly.

Cost Model for Mobile Farm Monetization

A useful cost model is simple enough to maintain every week. It should not depend on perfect accounting. It should capture the costs that change the decision.

Begin with fixed costs. These may include the device pool, platform tools, team access, and base management time. Fixed costs matter because they continue even when the workflow is quiet.

Add variable costs next. These may include operator time, review time, retries, routing resources, storage, screenshots, reports, and manual cleanup. Variable costs matter because they rise with volume.

Then count failure cost. A failed run may require a reset, another review, a repeated task, or a client explanation. A failure is not only a technical event. It consumes time and trust.

Use a plain formula:

- Usable value per run = business value minus direct run cost.

- Net workflow value = usable value minus review, retry, and overhead cost.

- Expansion signal = net value stays positive after repeat runs and handoff.

This formula is not meant to replace finance work. Operators can use it as a decision check. A team that cannot estimate these numbers should not scale the pool yet.
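The three lines of the formula can be sketched in a few functions. This is an illustration under stated assumptions: the field names are invented for the example, and "stays positive" is read here as "every recorded run has positive net value", which is one possible interpretation. To cover handoff, the list of runs should include runs done by a second operator.

```python
from dataclasses import dataclass


@dataclass
class RunEconomics:
    business_value: float    # value one completed run supports
    direct_run_cost: float   # device, operator time, and routing for the run
    review_cost: float
    retry_cost: float
    overhead_cost: float


def usable_value(run: RunEconomics) -> float:
    """Usable value per run = business value minus direct run cost."""
    return run.business_value - run.direct_run_cost


def net_workflow_value(run: RunEconomics) -> float:
    """Net workflow value = usable value minus review, retry, and overhead."""
    return usable_value(run) - (run.review_cost + run.retry_cost + run.overhead_cost)


def expansion_signal(runs: list[RunEconomics]) -> bool:
    """True only if there is at least one run and every run nets positive."""
    return bool(runs) and all(net_workflow_value(r) > 0 for r in runs)


# Example with made-up numbers.
good_run = RunEconomics(20.0, 8.0, 3.0, 2.0, 4.0)
weak_run = RunEconomics(10.0, 8.0, 3.0, 0.0, 0.0)
```

In this example `good_run` has a usable value of 12.0 and a net value of 3.0, while `weak_run` looks profitable before review cost (usable value 2.0) and turns negative after it (net value -1.0).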

The cost model also helps with pricing. Agencies and service teams can avoid undercharging when they see the real work behind each run. Internal teams can decide whether the workflow saves enough time to justify the device pool.

Governance and Policy Review

Governance keeps mobile farm monetization from drifting into risky behavior. A workflow should have a named owner, a rule boundary, and a stop condition. Those three items make the work easier to defend and easier to pause.

The owner decides who can change the lane. The rule boundary defines what the team may do. The stop condition explains when the workflow must pause for review. This does not need to be a long document. It needs to be clear.

Use a short governance checklist before expansion:

- What business task does this lane support?

- Which account, client, or campaign owns the lane?

- Which actions are allowed and which actions require approval?

- Which platform or client rules apply?

- What evidence proves the run was completed?

- What failure requires reset, review, or shutdown?

These questions reduce ambiguity. They also help new operators join the workflow without copying hidden habits from one experienced person.
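The checklist can also be stored per lane so that unanswered questions are visible before expansion. The sketch below is a hypothetical structure, not a required format. It treats the approval-required list as optional, on the assumption that some lanes have no such actions.

```python
from dataclasses import dataclass, field


@dataclass
class LaneGovernance:
    business_task: str = ""
    lane_owner: str = ""   # account, client, or campaign
    allowed_actions: list[str] = field(default_factory=list)
    approval_required_actions: list[str] = field(default_factory=list)
    applicable_rules: list[str] = field(default_factory=list)
    completion_evidence: str = ""
    stop_conditions: list[str] = field(default_factory=list)


# Fields that must be answered before a lane expands (an assumption).
REQUIRED = (
    "business_task",
    "lane_owner",
    "allowed_actions",
    "applicable_rules",
    "completion_evidence",
    "stop_conditions",
)


def missing_answers(lane: LaneGovernance) -> list[str]:
    """Return the required checklist fields that are still empty."""
    return [name for name in REQUIRED if not getattr(lane, name)]


# Example: a lane with only two questions answered so far.
draft = LaneGovernance(business_task="campaign QA", lane_owner="client-a")
gaps = missing_answers(draft)
```

A lane with a non-empty `gaps` list is a reasonable candidate to hold back from expansion until the owner fills in the rest.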

For teams that rely on proxy network routing, governance should include route notes. Every operator should know which route class belongs to which lane and when a route change requires approval.

Policy review should be treated as part of the workflow, not as an afterthought. Business tasks that touch app stores, ad platforms, social platforms, or client accounts need rule checks before scale.

Common Mistakes to Avoid

The first mistake is starting with a revenue target instead of a workflow. A target can guide planning, but it cannot define the work. Each team still needs to know what every device lane does and why it creates value.

Another mistake is ignoring policy risk. Platforms, marketplaces, app stores, ad networks, and client contracts may limit certain behavior. Teams should review official rules and client requirements before they scale. Google Play policy resources are a useful reminder that platform rules remain important regardless of tooling (Google Play Policy Center).

Device mixing is also common. One pool gets used for testing, marketing, client work, and support. That may feel efficient for a week. Later, nobody knows which state belongs to which workflow. Separate lanes reduce confusion.

Flat access creates quiet problems. When everyone can run, reset, change routing, and approve results, mistakes become harder to trace. Role separation keeps accountability clearer.

Poor recovery rules are another hidden cost. A failed run should not be followed by guessing. Mark the device state. Note what failed. Decide whether to reset, review, or quarantine. Return the device to service only when the owner can explain the state.
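A recovery rule like this can be sketched as a small state machine. The four states come from the recovery step in the getting-started sequence; the allowed transitions below are an assumption for illustration. In this version a device can return to ready only from review, and every change needs a note.

```python
from enum import Enum


class DeviceState(Enum):
    READY = "ready"
    UNDER_REVIEW = "under review"
    RESET_NEEDED = "reset needed"
    QUARANTINED = "quarantined"


# Assumed transition rules; adjust them to the team's own policy.
ALLOWED = {
    DeviceState.READY: {DeviceState.UNDER_REVIEW},
    DeviceState.UNDER_REVIEW: {
        DeviceState.READY,
        DeviceState.RESET_NEEDED,
        DeviceState.QUARANTINED,
    },
    DeviceState.RESET_NEEDED: {DeviceState.UNDER_REVIEW},
    DeviceState.QUARANTINED: {DeviceState.UNDER_REVIEW},
}


def transition(current: DeviceState, target: DeviceState, note: str) -> DeviceState:
    """Move a device to a new state, requiring a note that explains why."""
    if not note.strip():
        raise ValueError("a state change needs a note explaining what happened")
    if target not in ALLOWED[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target


# Example: a failed run sends a ready device to review.
state = transition(DeviceState.READY, DeviceState.UNDER_REVIEW, "login step failed")
```

Routing every return to service through review is a deliberate design choice here: it forces someone to explain the state before the device takes new work.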

The final mistake is scaling before the pilot has repeat data. One good run is not enough. Run the same workflow several times. Hand it to another operator. Check whether the result is still understandable. Only then does expansion become a business decision rather than a gamble.

Pilot Metrics and Review Loop

A monetization pilot needs a short review loop. The goal is to prove whether the device pool helps the business task. Vanity numbers are not enough.

Track five metrics first:

- Revenue or value per completed run: what business result does the run support?

- Cost per run: how much device, labor, tooling, and review cost went into it?

- Failure rate: how often did the run need retry, reset, or manual repair?

- Handoff time: how long did another operator need to continue the work?

- Review clarity: could a manager understand the result without private context?

These metrics do not need a large system on day one. A shared sheet may be enough. The important part is that the same fields are completed every time.
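If the sheet is exported, the five metrics can be computed with a short script. The sketch below assumes each run is recorded with the fields shown; the field names and the decision to report review clarity as a simple share of runs are illustrative choices, not a standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RunRecord:
    completed: bool
    value: float                       # estimated business value of the run
    cost: float                        # device, labor, tooling, and review cost
    needed_recovery: bool              # retry, reset, or manual repair
    handoff_minutes: Optional[float]   # None when no handoff happened
    reviewer_understood: bool          # result was clear without private context


def summarize(runs: list[RunRecord]) -> dict:
    """Compute the five pilot metrics over a non-empty list of runs."""
    if not runs:
        raise ValueError("at least one run is required")
    completed = [r for r in runs if r.completed]
    handoffs = [r.handoff_minutes for r in runs if r.handoff_minutes is not None]
    return {
        "value_per_completed_run": (
            sum(r.value for r in completed) / len(completed) if completed else 0.0
        ),
        "cost_per_run": sum(r.cost for r in runs) / len(runs),
        "failure_rate": sum(r.needed_recovery for r in runs) / len(runs),
        "avg_handoff_minutes": sum(handoffs) / len(handoffs) if handoffs else None,
        "review_clarity_rate": sum(r.reviewer_understood for r in runs) / len(runs),
    }


# Example with made-up records.
pilot = [
    RunRecord(True, 10.0, 4.0, False, None, True),
    RunRecord(True, 14.0, 4.0, False, 6.0, True),
    RunRecord(False, 0.0, 6.0, True, 10.0, False),
    RunRecord(True, 12.0, 6.0, True, None, True),
]
summary = summarize(pilot)
```

Cost is averaged over all runs, including failed ones, so that failures raise the cost per run instead of disappearing from it.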

Review the pilot after a fixed number of runs. Ten clean runs may teach more than one large batch. Look for patterns. Which lane fails most often? Which step takes the most labor? Which device state causes the most confusion?

The decision gate should be strict. Expand when value is clear, cost is visible, and recovery is boring. Pause when results depend on one expert, hidden notes, or manual cleanup that nobody counts.

Managers should also review idle time. A device pool that sits unused may still cost money. A pool that stays busy with low-value work may also waste money. Utilization only matters when the work itself is useful.

The strongest review is plain. Keep the lane if it creates measurable value and the team can recover failures quickly. Fix the lane if value is unclear but the workflow is important. Stop the lane if the work is vague, risky, or too costly to review.
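The keep, fix, or stop rule can be expressed as a small decision function. This is a sketch under assumptions: the inputs are yes/no judgments made by the reviewer, the stop conditions are checked first, and a lane that is neither valuable nor important defaults to stop.

```python
def review_lane(
    *,
    measurable_value: bool,
    fast_recovery: bool,
    important: bool,
    vague_or_risky: bool,
    too_costly_to_review: bool,
) -> str:
    """Return 'keep', 'fix', or 'stop' for one lane."""
    # Stop conditions take priority over everything else (an assumption).
    if vague_or_risky or too_costly_to_review:
        return "stop"
    if measurable_value and fast_recovery:
        return "keep"
    if important:
        return "fix"
    # Neither clearly valuable nor important: assumed to default to stop.
    return "stop"


# Example: an important lane whose value is not yet clear.
decision = review_lane(
    measurable_value=False,
    fast_recovery=True,
    important=True,
    vague_or_risky=False,
    too_costly_to_review=False,
)
```

Keyword-only arguments keep each judgment explicit at the call site, which makes the review easier to audit later.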

Fit Boundaries for Mobile Farm Monetization

This model works best when the task is repeated, reviewable, and tied to a real business outcome. It does not work well when the plan depends on unclear behavior or unsupported income claims.

Strong fit includes mobile QA, campaign QA, internal workflow support, support reproduction, and managed operations where clients understand the work. Medium fit includes social media operations where some steps can be standardized but others need human judgment. Weak fit includes vague “make money with phones” plans that lack a defined customer, output, or policy review.

The strongest operators can explain the model in plain terms. They can name the customer, task, device lane, review point, and cost. They also know what will stop the workflow.

Use local devices when physical inspection matters. Use remote phones when access, scale, separation, and handoff matter more. Use a hybrid setup when a team needs both hardware checks and remote review.

This boundary keeps the system realistic. A mobile farm is not a business by itself. It is infrastructure. The business comes from the workflow it supports.

Another boundary is skill. A team needs people who can operate the workflow, inspect results, and handle resets. Tools cannot replace that judgment. They can make the work easier to organize.

The last boundary is reputation. A short-term run that damages trust is not a good monetization model. Teams should prefer repeatable service quality over noisy activity. That is usually the more durable path for business operations.

Frequently Asked Questions

What is mobile farm monetization?

It means using managed mobile device capacity to support revenue-linked work. The work may include testing, campaign checks, client operations, or support review.

Is mobile farm monetization passive income?

Usually no. Real workflows require setup, review, monitoring, cost tracking, and recovery. Treat it as operations, not passive income.

What should a team measure first?

Measure cost per run, value per completed run, failure rate, handoff time, and review clarity. Those numbers show whether the workflow creates value once its costs are counted.

How many devices should a pilot use?

Use the smallest pool that can prove one workflow. Add more devices only after the pilot is repeatable.

Can automation improve monetization?

Automation can help after the process is stable. Begin with narrow tasks such as checks, capture, and reporting before broader actions.

What is the biggest risk?

The biggest risk is unclear work. A team that cannot explain the workflow, cost, rule boundary, and recovery path will create more problems by scaling.

Does a mobile farm replace local phones?

Not always. Local phones still matter for physical testing. Remote phones work better for shared access, parallel review, and repeat operations.

When should the team stop a pilot?

Stop when costs are unclear, failures repeat, policy questions remain unresolved, or results depend on one person's private knowledge.

Conclusion

The model is useful only when it supports a real workflow with measurable value. Device count is not the strategy. The strategy is a controlled operating model with clear lanes, roles, costs, review, and recovery.

A narrow pilot is the safer path. Choose one workflow, define the output, assign device lanes, track cost, and review results after repeat runs. Clear numbers support expansion. Vague numbers mean the workflow needs repair before more devices are added.

For MoiMobi users, the next step is to map one mobile workflow against the infrastructure stack: cloud phones for access, device isolation for lane separation, routing rules for consistency, and automation only for repeatable steps. Monetization becomes more realistic when the team can explain the work, measure the result, and recover from failure without guessing.