A Redfinger alternative is a cloud phone or mobile execution platform that better matches a team's workflow, review model, routing needs, and account operating rules.

The best choice for multi-account teams is not the tool with the loudest feature list. The stronger option keeps account lanes apart, access controlled, routes explainable, and recovery steps visible. For many teams, that means reviewing cloud phones as a work system instead of treating them as remote Android screens.

The selection rule is direct. Choose the platform that makes daily account work easier to assign, inspect, and repeat. If a platform gives operators access but leaves managers guessing about device state, ownership, or route history, it may create more overhead as the account pool grows.

MoiMobi should be considered when the team needs cloud phones, device isolation, proxy routing, and workflow handoff in one operating model. Redfinger may still fit some individual or light remote-phone use cases. The point is not to make unsupported claims about any competitor. The point is to compare the operating requirements that matter for multi-account teams.

Key Takeaways

- A Redfinger alternative should be judged by team workflow, not only remote access.

- Multi-account teams need device pools, role boundaries, routing notes, and recovery checks.

- The strongest comparison starts with use case fit before feature fit.

- MoiMobi is positioned as a mobile execution system for team-scale work.

- A small pilot is the best way to validate fit before moving account pools.

A practical comparison framework for a Redfinger alternative

The most useful comparison starts with the job the platform must do. A solo user may only need a remote Android device. A multi-account team needs a system for repeated work across accounts, operators, reviewers, and device states.

Use four comparison questions before reviewing features:

- Can the team separate account lanes clearly?

- Can managers control who operates, reviews, and resets devices?

- Can routing behavior be explained later?

- Can failed lanes be isolated without stopping the whole workflow?

These questions matter because account work becomes harder when responsibility is informal. One operator may know which device is clean. Another may know which route was used. A third may know which account group should not be touched. That knowledge does not scale if it only lives in chat history.

Google’s guidance for helpful content emphasizes value, reliability, and usefulness for people rather than thin claims (Google Search Central). The same idea applies to vendor comparison. A useful buying decision should explain what changes in daily work, not only list product categories.

For a Redfinger alternative, the comparison should therefore focus on work outcomes. Can the team reduce account mix-ups? Can it see which device belongs to which workflow? Can it pause a problem lane and recover it with less guessing? Those answers are more useful than a generic “cloud phone vs cloud phone” checklist.

The framework also avoids a common trap. Teams sometimes compare platforms by counting screens, sessions, or headline automation language. Those numbers can matter, but they do not answer whether the system is manageable. A smaller controlled pool can be more useful than a larger pool with weak ownership.

A Redfinger alternative should therefore be tested against the buyer's real account process. The useful question is not “Which product has more labels?” The useful question is “Which product makes our next workday easier to run and review?”

Use case fit before feature fit in a Redfinger alternative

Feature comparison becomes clearer after the use case is defined. Multi-account teams usually need one of several operating patterns: social media operations, mobile QA, app workflow review, regional account checks, or support investigation. Each pattern has different tolerance for complexity.

Social media teams often care about account grouping, clean handoff, and review visibility. They may need separate cloud phone pools for different brands, markets, or operator groups. The risk is not only a device being unavailable. The larger risk is losing track of which account lane was changed and why.

QA teams usually care about repeatability. They need to confirm that the same workflow can run again under controlled conditions. For this use case, cloud phones should be judged by device state clarity, reset process, and whether testers can reproduce app behavior without rebuilding setup every time.

Support teams care about investigation speed. They need to inspect a mobile flow, reproduce a reported issue, and document what changed. A platform that supports clearer device history and review notes can reduce back-and-forth.

Google Play policy guidance is also relevant when account work touches apps or platform behavior. Teams still need to follow platform rules and user safety expectations (Google Play Policy Center). A cloud phone platform can improve control, but it does not replace policy responsibility.

The right Redfinger alternative should match the use case before the team studies advanced features. Undefined workflows make every product look similar. Clear workflows make the differences easier to see.

Redfinger alternative comparison matrix for account teams

The matrix below keeps the comparison grounded in operating needs. It does not assume private competitor details. It shows what multi-account teams should validate during product evaluation.

| Decision area | What to check | Why it matters for teams |

|---|---|---|

| Device pools | Can accounts be grouped by workflow, market, or owner? | Prevents mixed state and vague responsibility. |

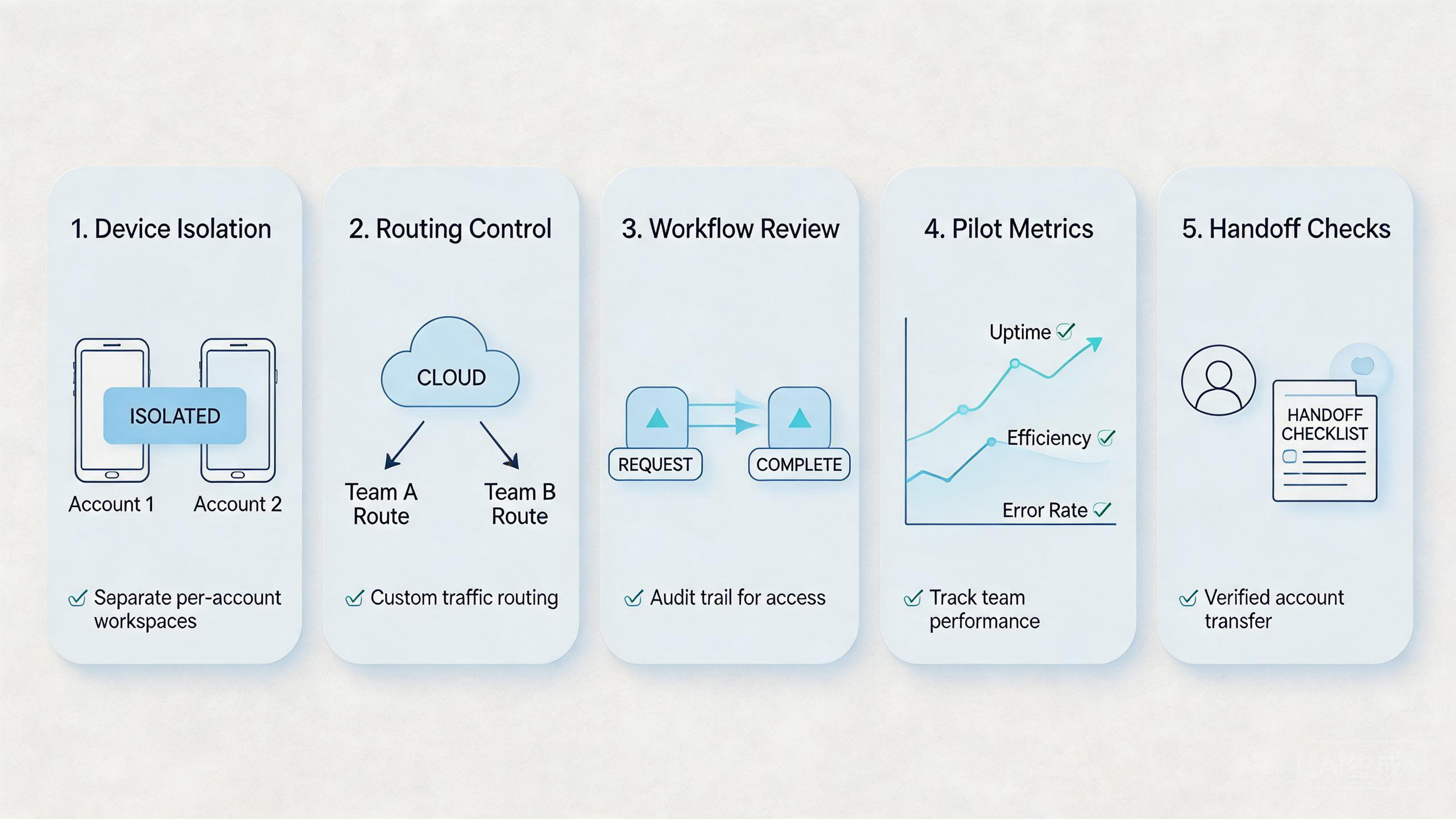

| Device isolation | Can account lanes stay separated enough for review? | Reduces confusion between unrelated workflows. |

| Routing control | Can route assumptions be recorded and repeated? | Makes troubleshooting easier after a failed run. |

| Access roles | Can operators, reviewers, and admins have different permissions? | Limits accidental changes and improves accountability. |

| Recovery process | Can a bad lane be paused, reset, and returned clearly? | Keeps one issue from spreading across the pool. |

| Automation fit | Can repeatable steps connect to the device layer? | Helps teams scale work without hiding responsibility. |

| Reporting habit | Can managers inspect status without asking every operator? | Improves handoff and daily review speed. |

This comparison helps teams avoid vague buying logic. A platform is not a better Redfinger alternative just because it sounds broader. A product is better for a specific team when it reduces that team's current work friction.

For MoiMobi, the strongest fit appears when the buyer needs cloud phone capacity as part of a larger account workflow. That may include device isolation, proxy network planning, and multi-account management.

The comparison should also include what the team does not need. A buyer who only wants occasional remote Android access may not need a heavier team workflow system. A buyer who runs shared account work every day may need more control than lightweight remote access provides.

This is where the Redfinger alternative decision becomes more concrete. The right choice should match the account team's daily handoff pattern, not an abstract feature list.

Operating trade-offs and team workflow

Every platform choice creates trade-offs. A simple remote phone setup can be faster to start. A structured cloud phone workflow can take more planning but may reduce confusion later. The correct choice depends on team size, account sensitivity, review needs, and recovery cost.

For a multi-account team, the main trade-off is usually setup discipline versus daily chaos. A disciplined setup asks the team to define pools, roles, routes, and reset rules. That work takes time. It also gives operators a clearer path during daily execution.

Workflow handoff is one of the most important tests. Ask what happens when one operator stops and another continues. Does the second person know which account lane to use? Can they see whether the device is clean? Do they know whether route behavior changed? Answers that require several private messages show that the platform is not carrying enough work context.

A Redfinger alternative for team use should reduce those private-message checks. The platform should make lane status, device owner, and review state easier to see before work continues.

The same issue appears during review. A lead may need to inspect twenty account lanes at the end of a day. The lead should not depend on memory or scattered notes. A practical system should make lane status visible enough to decide what is active, paused, or ready for reset.
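One way to picture that end-of-day review is a small status model. The sketch below is illustrative only: the `Lane` record, the `LaneStatus` values, and the lane names are hypothetical and do not reflect any vendor's data model or API.

```python
from dataclasses import dataclass
from enum import Enum


class LaneStatus(Enum):
    ACTIVE = "active"
    PAUSED = "paused"
    READY_FOR_RESET = "ready_for_reset"


@dataclass
class Lane:
    lane_id: str          # hypothetical lane name
    owner: str            # operator currently responsible
    status: LaneStatus
    note: str = ""        # short review note, if any


def summarize(lanes):
    """Group lane IDs by status so a lead can scan the day's state."""
    summary = {status: [] for status in LaneStatus}
    for lane in lanes:
        summary[lane.status].append(lane.lane_id)
    return summary


lanes = [
    Lane("brand-a-01", "operator-1", LaneStatus.ACTIVE),
    Lane("brand-a-02", "operator-2", LaneStatus.PAUSED, "route changed, needs review"),
    Lane("brand-b-01", "operator-1", LaneStatus.READY_FOR_RESET),
]
summary = summarize(lanes)
for status, ids in summary.items():
    print(f"{status.value}: {ids}")
```

If a platform or a shared sheet can produce a summary like this without asking each operator, the lead's review no longer depends on memory.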

Account work also needs cautious language around results. No platform can remove every app, policy, or operational risk. Teams should be wary of any vendor message that suggests account outcomes can be made automatic or certain. A good comparison rewards control and review, not impossible promises.

MoiMobi’s positioning is strongest when the buyer values stable execution. That includes parallel mobile access, device grouping, reusable workflows, and clean handoff. The platform should be judged by whether it supports those habits in the buyer's actual workflow.

Setup cost, ongoing cost, and team overhead

Cost is not only subscription price. For account teams, cost includes setup time, operator training, review time, error recovery, and the hidden expense of unclear ownership. A low-friction tool can become expensive if managers spend hours chasing device state.

Use a total operating cost view:

- Setup cost: time spent creating pools, access roles, route rules, and reset policies.

- Training cost: time required for operators and reviewers to use the system correctly.

- Review cost: time spent checking account lane status and work quality.

- Recovery cost: time spent isolating, resetting, and returning failed lanes.

- Change cost: effort needed when account groups, regions, apps, or workflows change.

This view makes the Redfinger alternative decision more practical. A team may accept more setup work if it lowers recovery cost. Another team may prefer a simpler tool if its account volume is low and review needs are light.
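The total operating cost view can be reduced to simple arithmetic. The sketch below is a minimal illustration, assuming that setup and training are one-time costs and that review, recovery, and change effort recur monthly. Every number, the hourly rate, and the two option names are made-up placeholders, not pricing or measurements for any real product.

```python
from dataclasses import dataclass


@dataclass
class OperatingCost:
    subscription: float    # monthly fee (placeholder)
    setup_hours: float     # one-time
    training_hours: float  # one-time
    review_hours: float    # per month
    recovery_hours: float  # per month
    change_hours: float    # per month


def total_cost(cost, hourly_rate, months):
    """One-time effort plus recurring fee and effort over the period."""
    one_time = (cost.setup_hours + cost.training_hours) * hourly_rate
    monthly = cost.subscription + (
        cost.review_hours + cost.recovery_hours + cost.change_hours
    ) * hourly_rate
    return one_time + monthly * months


# Placeholder inputs: a lighter tool with little setup but heavy review,
# and a more structured tool with more setup but lighter review.
light = OperatingCost(100, 4, 2, 20, 10, 4)
structured = OperatingCost(200, 20, 10, 8, 3, 2)

print(total_cost(light, hourly_rate=30, months=6))       # 6900
print(total_cost(structured, hourly_rate=30, months=6))  # 4440
```

With these placeholder inputs the higher subscription is offset by lower review and recovery hours. Real inputs from a pilot could just as easily point the other way.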

Do not judge a Redfinger alternative only by the first setup day. Judge it by the second month, when account volume, team habits, and exception cases reveal whether the process is still clear.

Team overhead should also be measured during a pilot. Track how many questions leads ask before they understand account status. Track how long it takes to move a lane from active to reviewed. Track how often a lane marked reusable needs follow-up cleanup.

These measures are more useful than a broad claim about which product is cheaper. Pricing and packaging can change. Workflow overhead is easier to test inside the buyer's own process.

Which option fits different teams best

The best option depends on the team's operating shape. A Redfinger alternative should be matched to use case, not chosen from a generic ranking.

Solo operators and light remote access

A solo operator may not need a full team workflow system. The main requirement may be remote Android access, basic app use, and simple session continuity. In that case, the buyer should avoid overbuilding.

The fit question is simple: does the tool solve the immediate remote access need without adding unnecessary process? If yes, a lighter platform may be enough.

Small teams with repeated account work

A small team needs more structure than a solo user. It may still keep the process simple, but it should define device ownership, account grouping, and reset rules. Otherwise, informal habits become bottlenecks.

MoiMobi can fit this stage when the team wants to grow into a more controlled model. A small team can begin with a narrow pool and add process as the workflow stabilizes.

Agencies and account operations teams

Agencies usually need clearer separation. Different client accounts, workflows, markets, or operator groups should not blur together. Review quality also matters because multiple people may touch the same account lane.

For this group, a Redfinger alternative should be judged by team handoff, pool design, route consistency, and audit readiness. The product should help managers see what happened without reconstructing the day from chat messages.

QA and product teams

QA teams often value repeatability more than raw account volume. They need to reproduce workflows, compare app behavior, and reset environments between test runs. The cloud phone layer should support consistent state review.

This use case connects naturally with mobile automation. Automation can run repeatable checks, while cloud phones provide the Android environment. The team still needs human review for judgment-heavy issues.

Teams with unclear account processes

Some teams should pause before switching tools. If account ownership is unclear, route rules are undocumented, and recovery depends on guesswork, a new platform may not solve the problem.

The better first step is process mapping. Write down the current account groups, owners, device states, route assumptions, and failure patterns. Then compare products against that map.

Pilot checklist before replacing a tool

A pilot reduces the risk of choosing based on assumptions. It also gives the buyer evidence from its own workflow.

Run the pilot with one account group and one operator team. Keep the scope narrow enough to inspect daily. The test should answer whether the alternative improves assignment, handoff, review, and recovery.

Use this pilot checklist:

- Define the account group. Choose accounts with a real recurring workflow.

- Build a small device pool. Keep it limited enough for daily review.

- Assign roles. Separate operators, reviewers, and admins.

- Record route assumptions. Document expected routing behavior for the pool.

- Track handoff time. Measure how long another operator needs to continue work.

- Track recovery time. Measure how long a failed lane takes to isolate and return.

- Review exceptions. Note every case where someone bypasses the normal process.
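The handoff, recovery, and exception items in the checklist can be tracked with a very small log. The sketch below assumes the team records a start and end timestamp for each handoff and recovery. The event format, lane names, and timestamps are hypothetical examples, not output from any platform.

```python
from datetime import datetime
from statistics import median

# Hypothetical pilot log; every value here is made up for illustration.
PILOT_EVENTS = [
    {"lane": "pilot-01", "kind": "handoff",
     "start": "2024-05-06T09:00:00", "end": "2024-05-06T09:12:00"},
    {"lane": "pilot-02", "kind": "handoff",
     "start": "2024-05-06T13:00:00", "end": "2024-05-06T13:20:00"},
    {"lane": "pilot-02", "kind": "recovery",
     "start": "2024-05-07T10:00:00", "end": "2024-05-07T10:45:00"},
]
EXCEPTIONS = ["pilot-02: operator skipped the reset note"]


def minutes_between(start, end):
    """Elapsed minutes between two ISO 8601 timestamps."""
    delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
    return delta.total_seconds() / 60


def median_minutes(events, kind):
    """Median duration for one event kind, or None if nothing was logged."""
    durations = [
        minutes_between(e["start"], e["end"]) for e in events if e["kind"] == kind
    ]
    return median(durations) if durations else None


print("median handoff (min):", median_minutes(PILOT_EVENTS, "handoff"))
print("median recovery (min):", median_minutes(PILOT_EVENTS, "recovery"))
print("exceptions logged:", len(EXCEPTIONS))
```

A median is used here so that one unusually slow handoff does not dominate a short pilot. A team could reasonably track the worst case as well.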

The pilot should end with a decision, not a feeling. Keep the alternative when it makes daily work easier to control. Rework the process when the tool exposes unclear ownership. Pause migration when the team cannot explain why the new workflow is better.

Migration planning without disrupting account work

Migration should be staged because account operations depend on continuity. Moving every lane at once creates avoidable uncertainty. A better plan moves one workflow, reviews the result, then expands only after the team can explain what improved.

Begin with account inventory. List the account groups, owners, current device setup, route assumptions, and known exceptions. This inventory prevents the team from moving hidden problems into the new platform without noticing.
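An inventory is easier to trust when gaps are flagged before migration. The sketch below is a minimal example, assuming the inventory is kept as simple records. The field names and sample rows are hypothetical and should be replaced with the team's own.

```python
# Fields the team treats as mandatory before a group can move (an assumption).
REQUIRED_FIELDS = ("group", "owner", "device_setup", "route_assumption")

# Made-up sample rows for illustration only.
inventory = [
    {"group": "brand-a", "owner": "operator-1", "device_setup": "dedicated",
     "route_assumption": "region-1 proxy", "exceptions": ""},
    {"group": "brand-b", "owner": "", "device_setup": "shared pool",
     "route_assumption": "", "exceptions": "manual reset on Fridays"},
]


def missing_fields(row):
    """Return the required fields that are absent or blank in one row."""
    return [field for field in REQUIRED_FIELDS if not row.get(field, "").strip()]


# Map each incomplete account group to the fields it still lacks.
gaps = {
    row["group"]: missing_fields(row) for row in inventory if missing_fields(row)
}
print(gaps)
```

Groups that appear in `gaps` are candidates for process mapping first, not for the first migration wave.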

Next, choose a test group that is important but not mission-critical. The pilot should be real enough to reveal workflow issues. It should not be so risky that the team rushes decisions under pressure.

Then map each account group to a lane design. Decide whether the group needs a dedicated cloud phone, a shared pool, or a temporary review lane. The answer may vary by account sensitivity, workload frequency, and review needs.

Migration also needs a rollback rule. A rollback is not a failure. It gives the team a controlled way to protect account work when the new setup exposes missing process. Define the trigger before the pilot starts. Examples include unclear ownership, repeated reset issues, or review notes that do not explain failures.
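Writing the rollback triggers down as explicit thresholds keeps the decision from becoming a debate mid-pilot. The sketch below is one possible shape. The trigger names mirror the examples above, while the threshold values and the sample observations are hypothetical and should be agreed by the team before the pilot starts.

```python
# Hypothetical thresholds; a count at or above the limit triggers rollback.
ROLLBACK_TRIGGERS = {
    "lanes_without_owner": 1,
    "repeated_reset_issues": 3,
    "unexplained_failures": 2,
}


def triggered(observed, triggers=ROLLBACK_TRIGGERS):
    """Return the names of triggers whose observed count meets the limit."""
    return sorted(
        name for name, limit in triggers.items() if observed.get(name, 0) >= limit
    )


# Made-up observations from a first pilot week.
week_one = {
    "lanes_without_owner": 0,
    "repeated_reset_issues": 3,
    "unexplained_failures": 1,
}
hits = triggered(week_one)
print("roll back" if hits else "continue", hits)
```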

The handoff plan should be written in plain language. Operators need to know where to work, what to record, and when to pause. Reviewers need to know which signals matter before a lane returns to use. Admins need to know who can change route rules, reset devices, or retire a lane.

This written handoff plan is a practical Redfinger alternative test. A platform that supports the plan is helping the team. A platform that forces workarounds may not fit the team's account model.

Governance checks for a Redfinger alternative

Governance sounds heavy, but the useful version is simple. It means the team knows who can access account lanes, who can change settings, and who can approve recovery. Without those answers, the platform becomes harder to trust as account volume grows.

Access review is the first governance habit. Remove users who no longer need a pool. Keep admin rights limited. Separate operator access from reviewer access when the workflow allows it.
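The access review habit can be sketched as a role-to-action map plus a stale-member check. This is an illustration under assumptions: the role names follow the article, but the action names, the permission split, and the user names are hypothetical and are not taken from any platform's permission model.

```python
# Hypothetical permission split: operators work, reviewers review,
# and only admins change routes, reset devices, or retire lanes.
ROLE_ACTIONS = {
    "operator": {"operate"},
    "reviewer": {"review"},
    "admin": {"change_route", "reset_device", "retire_lane"},
}


def can(role, action):
    """True if the role is allowed to perform the action."""
    return action in ROLE_ACTIONS.get(role, set())


def stale_members(pool_members, active_users):
    """Pool members who no longer appear on the active roster."""
    return sorted(set(pool_members) - set(active_users))


print(can("operator", "reset_device"))   # False
print(can("admin", "reset_device"))      # True
print(stale_members(["ana", "ben", "chi"], ["ana", "chi"]))  # ['ben']
```

Running the stale-member check on a schedule is one simple way to keep pool access aligned with who actually works in it.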

Change review is the second habit. Important changes should be visible enough to explain later. Route changes, account reassignment, app updates, automation edits, and reset decisions should not disappear into private messages.

Exception review is the third habit. A special workaround may be necessary for one account group. It becomes a problem when nobody records why the exception exists. Add a short note for each exception and revisit it during the pilot review.

The final governance check is policy awareness. Cloud phones can support cleaner execution, but they do not decide whether a workflow is acceptable for a platform. Teams should keep platform rules and app requirements in scope when they design account work.

These checks make the Redfinger alternative decision more durable. The buyer is not only choosing where Android devices run. The buyer is choosing how account work will be assigned, controlled, reviewed, and recovered.

Frequently Asked Questions

What is a Redfinger alternative for multi-account teams?

It is a cloud phone or mobile execution platform that better fits team account workflows. The focus is account lanes, role control, routing, review, and recovery.

Is MoiMobi always the best Redfinger alternative?

Not always. MoiMobi is a stronger fit when teams need a mobile execution system. A lighter tool may fit occasional remote Android access.

What should teams compare first?

Compare workflow fit first. Device pools, access roles, route control, and recovery process usually matter more than a broad feature list.

Should teams migrate all accounts at once?

No. A narrow pilot is usually more useful. Start with one account group, one pool, and one review loop.

Can a cloud phone platform remove account risk?

No. Teams still need platform policy awareness, good account practices, and careful review. Cloud phones improve control, but they do not remove responsibility.

How does device isolation affect the choice?

Device isolation helps teams keep account lanes separated and easier to review. It matters more when several operators handle different account groups.

What metrics should a pilot track?

Track setup time, handoff time, recovery time, review clarity, and exception count. These signals show whether the new system improves operations.

How should teams handle pricing comparisons?

Compare total operating cost, not only subscription price. Include setup time, training, review overhead, and recovery effort.

Conclusion

The best Redfinger alternative for multi-account teams is the platform that fits the team's operating model. Remote Android access is only the starting point. Team-scale account work also needs device pools, ownership, route clarity, role boundaries, and recovery checks.

MoiMobi is worth reviewing when the team wants cloud phones as part of a larger work system. It connects naturally with device isolation, proxy routing, account operations, and mobile automation. That makes it a practical option for teams that need controlled handoff instead of only individual remote access.

Before switching, run a small pilot. Pick one account group, define the workflow, assign a device pool, and measure handoff plus recovery. A pilot that reduces confusion and makes review easier shows useful fit. A pilot that stays confusing means the team should fix the operating model before moving more accounts.