Key Takeaways

- AI browser automation is the use of browser agents, scripts, and workflow rules to run repeatable web tasks with less manual work

- The value comes from cleaner handoff, repeatable steps, faster checks, and better evidence after a task fails

- Teams should treat browser agents as one layer in an execution system, not as a full replacement for operators

- Browser automation fits web dashboards, account checks, report pulls, queue review, and structured form work

- Mobile app workflows may need cloud phones, device isolation, or mobile automation instead of a browser-only setup

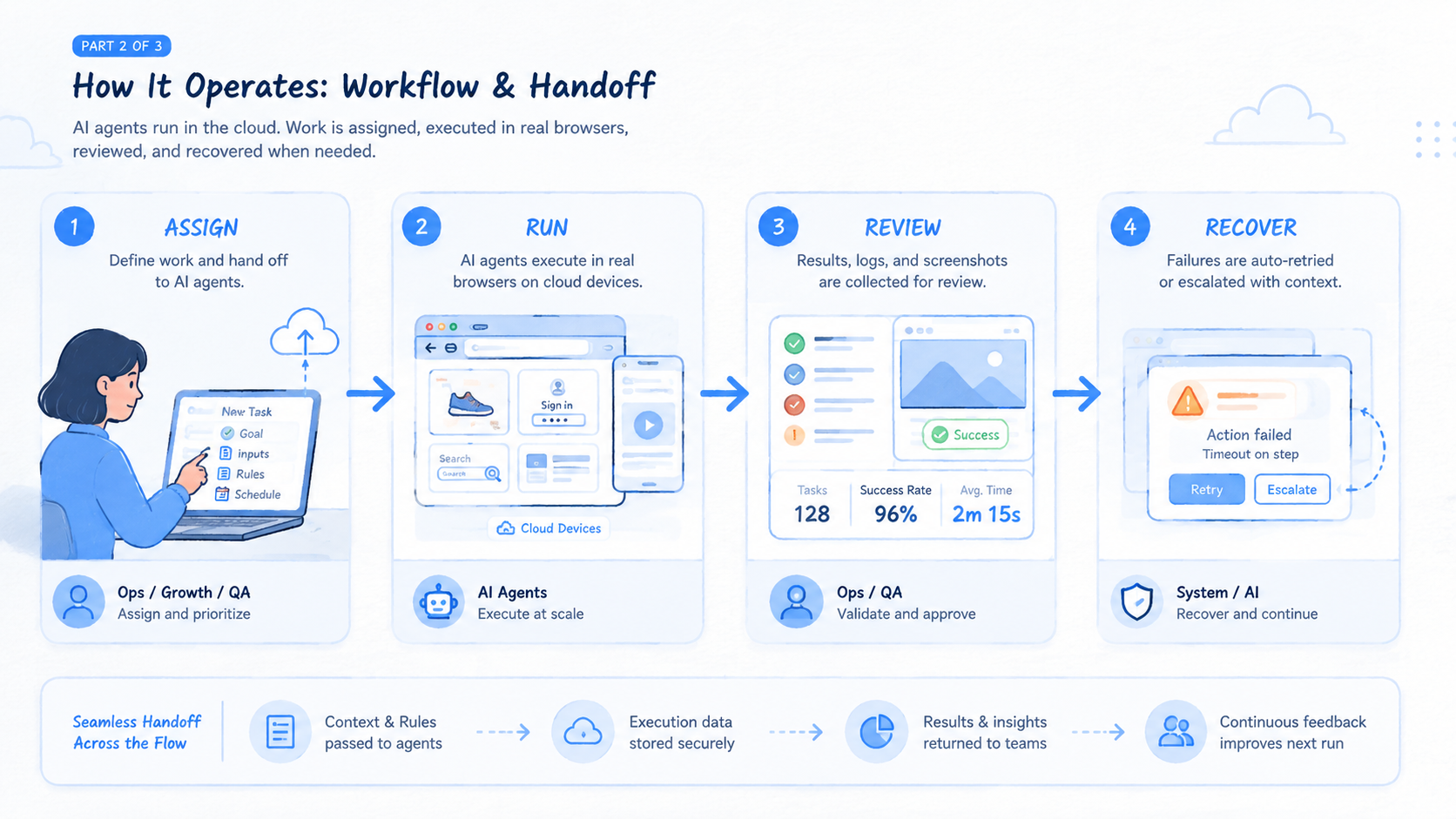

AI browser automation is a way to run online operations through controlled browser agents, repeatable workflows, and clear review rules. It helps teams move repetitive web tasks out of private tabs and into a shared execution process.

The new part is not the browser. Teams have used browser control for years. The change is that AI agents can now follow task instructions, read page context, summarize outcomes, and stop when a workflow moves outside the known path. That shift is useful only when the team also defines owners, routes, profile state, and recovery rules.

For operations leaders, the decision is practical. Can a browser agent reduce repeated clicks without making the result harder to trust? A good setup saves time because each task has a normal path, a stop path, and a record. A weak setup only creates faster confusion.

Browser automation should be judged like infrastructure. It must support capacity, handoff, review, and recovery. It should not be sold as magic. MoiMobi's position is similar across mobile work: execution at scale depends on clean environments, clear routing, and reusable workflows.

What Is AI Browser Automation?

AI browser automation means using an AI-assisted browser agent or automation layer to complete structured web tasks. The agent may open pages, click buttons, read content, fill fields, collect results, or write a status note. The team defines what the task is allowed to do.

The common myth is that an agent can simply "run online operations." Real operations are more specific. A team may need to check account status, download reports, monitor pages, update dashboards, or review queues. Each task needs a different rule set.

Browser automation works best when the workflow has clear boundaries:

- Known input

- Known website or tool

- Known browser profile or account lane

- Known output format

- Known stop conditions

- Known review owner

Those limits are not a weakness. They make the system safer to run and easier to improve. A task that stops at a new login prompt is better than a task that guesses through a sensitive step.
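The boundary list above can be written down as data, so a missing boundary shows up before the first run. This is a minimal sketch, not a prescribed schema: the class name, field names, and example values are all assumptions made for illustration.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class TaskBoundary:
    """One bounded browser task. All field names are illustrative."""
    task_input: str          # known input, e.g. a list of account URLs
    target_site: str         # known website or tool
    profile: str             # known browser profile or account lane
    output_format: str       # known output format
    stop_conditions: tuple   # known stop conditions
    review_owner: str        # known review owner


def missing_boundaries(task: TaskBoundary) -> list[str]:
    """Return the names of boundaries that are empty or undefined."""
    return [f.name for f in fields(task) if not getattr(task, f.name)]


task = TaskBoundary(
    task_input="account_urls.csv",
    target_site="https://dashboard.example.com",
    profile="lane-a",
    output_format="sheet_row",
    stop_conditions=(),          # nothing defined yet
    review_owner="ops-lead",
)
print(missing_boundaries(task))  # ['stop_conditions']
```

A task with any missing boundary would simply not be queued until the gap is filled.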

Browser tooling also has established technical roots. MDN's WebDriver documentation describes WebDriver as a remote-control interface for user agents. AI adds planning and interpretation, but the operational question remains the same: can the browser action be controlled, logged, and reviewed?

Why AI Browser Automation Matters for Online Operations

Online operations often fail at the handoff point. One person knows which account was checked, which warning appeared, and which dashboard was updated. Another teammate sees only a half-finished tab or a vague chat message.

AI browser automation matters because it can move repeatable work into a shared model. A task can start from a queue, use an assigned profile, run a known path, save output, and leave a note. That reduces the need for private memory.

The value is not only speed. Speed without evidence creates risk. The better goal is total effort reduction:

| Work area | Manual pattern | Better automation pattern |
|---|---|---|
| Account checks | Open pages one by one | Run a queued check with profile rules |
| Report pulls | Copy fields into a sheet | Extract fields into a standard row |
| Queue review | Scan items by memory | Flag exceptions with a review note |
| Handoff | Ask in chat | Read the task state and next action |
| Recovery | Recreate the failure | Review the log, state, and stop reason |

The Playwright documentation on browser contexts describes them as isolated environments with separate storage, such as cookies and local storage. That concept is useful for operations too. When browser state is mixed, teams lose confidence in account work. Separation makes review easier.

The strongest teams do not automate every click in the first version, because broad scope hides weak task design and weak review habits. Fix those before the scope grows.

They automate the predictable part, then route exceptions to a person with enough context to decide the next step. That balance protects time and judgment.

Key Benefits and Use Cases

The biggest benefit is clean repetition. A team can turn a recurring task into a named workflow with inputs, state, output, and review. That makes the work less dependent on the habits of one operator.

Use cases usually fall into five groups:

- Monitoring: check pages, dashboards, or account states on a schedule

- Reporting: pull values from web tools into a shared record

- Account operations: run checks across account lanes with profile rules

- Queue work: review items, label exceptions, and save notes

- Research support: gather structured page data for a defined question

A scenario makes the value clearer. A growth operations team reviews many account dashboards each morning. Manual work means opening dashboards, checking alerts, copying data, and asking a lead about unusual cases.

A browser agent can run the normal checks, record standard fields, and stop on unknown alerts. The team lead then reviews only the exception list.

Use a visible exception queue. Add the account lane, alert type, page reached, and next owner. A short note beats a long chat because the next person can act without asking for context.
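One way to picture the exception queue is as a small record with those four fields plus a one-line note. The sketch below is illustrative only: the class name, field names, and sample entries are assumptions, and a real queue would live in whatever tracker the team already uses.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ExceptionItem:
    account_lane: str
    alert_type: str
    page_reached: str
    next_owner: str

    def note(self) -> str:
        """One-line note the next person can act on without extra context."""
        return (f"[{self.account_lane}] {self.alert_type} at "
                f"{self.page_reached} -> {self.next_owner}")


def by_owner(items):
    """Group exception notes by the person who has to act next."""
    queue = defaultdict(list)
    for item in items:
        queue[item.next_owner].append(item.note())
    return dict(queue)


# Sample entries are made up for illustration.
items = [
    ExceptionItem("client-b", "unknown alert", "/billing", "sam"),
    ExceptionItem("client-a", "new login prompt", "/login", "sam"),
    ExceptionItem("client-c", "missing page", "/reports", "lee"),
]
for owner, notes in by_owner(items).items():
    print(owner, notes)
```

Grouping by next owner keeps the morning review to one list per person instead of one thread per account.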

That does not remove people from the loop. It moves people to the points where judgment matters. Operators still decide account policy, client response, and escalation across accounts with different value, age, risk, and history. The agent handles the repeated path.

Run the same pattern for report pulls. In that workflow, the browser opens the dashboard, reads fixed fields, saves a row, and stops on missing data. The reviewer checks odd values, not every normal field.
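The report-pull pattern can be sketched as a function that either returns a standard row or stops. The field names and the `StopRun` exception are hypothetical, and the dictionary stands in for whatever the browser agent actually reads from the page.

```python
REQUIRED_FIELDS = ("account", "status", "spend")  # hypothetical field names


class StopRun(Exception):
    """Raised when the workflow leaves the known path."""


def to_row(page_values: dict) -> list:
    """Turn values read from a dashboard into one standard row.

    Stops instead of guessing when a required field is missing or blank.
    """
    missing = [f for f in REQUIRED_FIELDS if page_values.get(f) in (None, "")]
    if missing:
        raise StopRun(f"missing data: {', '.join(missing)}")
    return [page_values[f] for f in REQUIRED_FIELDS]


print(to_row({"account": "A-17", "status": "active", "spend": "120.50"}))

try:
    to_row({"account": "A-18", "status": ""})
except StopRun as stop:
    print("stopped:", stop)  # stopped: missing data: status, spend
```

Only `None` and empty strings count as missing here, so a legitimate value of `0` still produces a row for the reviewer to check.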

For teams managing account pools, the browser workflow should connect to multi-account management rules. Profile ownership, route policy, and account state need to match. Otherwise, automation may make messy work faster.
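A pre-run check can catch mismatches between lanes, profiles, owners, and routes before the agent touches any account. The lane table below is invented for illustration, and the rules it enforces (one profile per lane, an owner and a route on every lane) are one reasonable reading of the paragraph above, not a fixed standard. It assumes a route may be shared across lanes while a profile may not.

```python
from collections import Counter

# Hypothetical lane table; in practice this comes from the team's own records.
lanes = [
    {"lane": "client-a", "profile": "profile-01", "owner": "sam", "route": "eu-1"},
    {"lane": "client-b", "profile": "profile-02", "owner": "lee", "route": "us-1"},
    {"lane": "client-c", "profile": "profile-02", "owner": "", "route": "us-1"},
]


def lane_problems(lanes):
    """List reasons a lane table is not ready for automated runs."""
    problems = []
    profile_use = Counter(lane["profile"] for lane in lanes)
    for lane in lanes:
        # A profile used by more than one lane means mixed browser state.
        if profile_use[lane["profile"]] > 1:
            problems.append(f"{lane['lane']}: profile {lane['profile']} is shared")
        for key in ("owner", "route"):
            if not lane.get(key):
                problems.append(f"{lane['lane']}: no {key}")
    return problems


for problem in lane_problems(lanes):
    print(problem)
```

An empty result is the signal that the lane table is clean enough to automate against.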

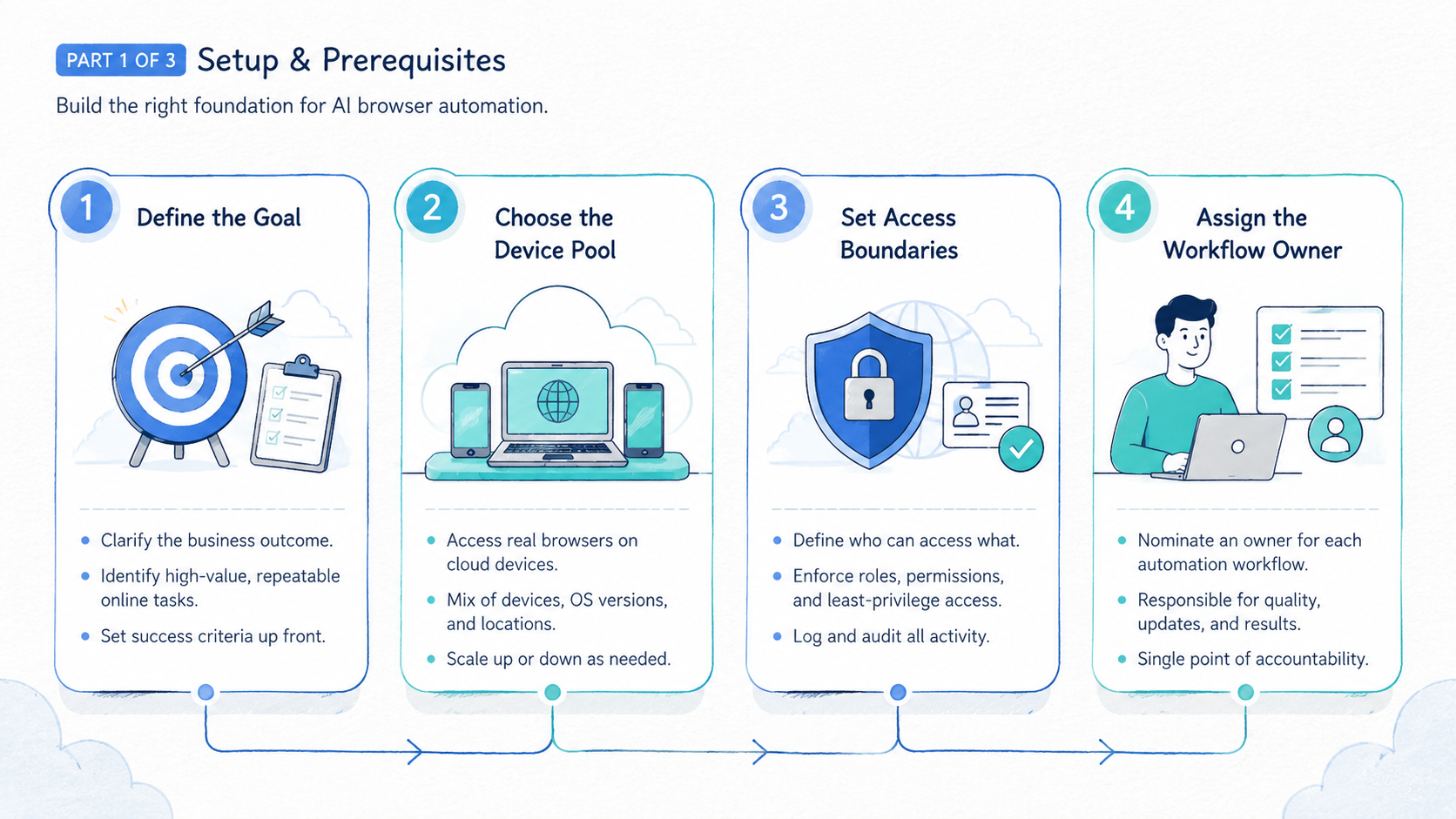

How to Get Started with AI Browser Automation

Start with checkpoints, not a broad agent prompt. A broad prompt hides weak workflow design. A checkpoint tells the team whether the workflow is ready to scale.

- Workflow checkpoint: one task lane is written in plain steps

- Profile checkpoint: each account or client lane has a defined browser state

- Output checkpoint: the result format is fixed before the run starts

- Stop checkpoint: new login prompts, unknown warnings, and changed screens pause the run

- Review checkpoint: one owner checks the first results and labels failures

- Recovery checkpoint: the team can return a failed task to a known state

Use a small pilot. Pick one task that is boring, frequent, and easy to verify. A weekly report pull, daily account health check, or queue review is a better first workflow than a complex campaign task.

Write the workflow like this:

| Field | Example |
|---|---|
| Task name | Daily account status check |
| Input | Account URL list |
| Browser state | Assigned profile per account lane |
| Normal path | Open dashboard, read status, record warning |
| Stop path | New verification, missing page, unknown warning |
| Output | Sheet row plus short note |
| Owner | Operations lead |

The stop path matters most. A browser agent should not improvise through a new verification screen or a high-impact action. Pause first. Review next.
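A stop rule is easier to trust when it is an allowlist: the run continues only if everything the agent observed belongs to the normal path. The sketch below assumes the agent reports what it sees as short signal names; those names are hypothetical.

```python
# Hypothetical signal names for the normal path of the example workflow.
KNOWN_NORMAL = {"dashboard_loaded", "status_visible", "known_warning"}


def next_step(observed) -> tuple:
    """Decide whether a run may continue on the normal path.

    Returns ("continue", []) or ("stop", [unexpected signals]).
    Anything not on the allowlist stops the run, so a new screen
    pauses the task even if nobody predicted it.
    """
    unexpected = sorted(set(observed) - KNOWN_NORMAL)
    if unexpected:
        return ("stop", unexpected)
    return ("continue", [])


print(next_step({"dashboard_loaded", "status_visible"}))
# ('continue', [])
print(next_step({"dashboard_loaded", "verification_prompt"}))
# ('stop', ['verification_prompt'])
```

A blocklist of known bad screens would miss the one that appears next month. The allowlist fails closed instead.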

Teams with mobile app work should also decide where the browser layer ends. Native Android app flows change the execution layer. A browser agent becomes only one part of the system. MoiMobi's mobile automation layer is better suited for app-side execution.

Add one handoff rule before scaling: the browser task must say whether the next action is web-side, mobile-side, or human review. That small field prevents operators from sending app work back into a browser queue. It also helps managers see which layer is carrying the workload.
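The handoff field can be made mandatory by giving it a fixed set of values and no default. This is a sketch under assumed names: `NextLayer`, `TaskResult`, and the queue names are all illustrative.

```python
from dataclasses import dataclass
from enum import Enum


class NextLayer(Enum):
    WEB = "web-side"
    MOBILE = "mobile-side"
    HUMAN = "human review"


@dataclass
class TaskResult:
    task_name: str
    status: str
    next_layer: NextLayer  # required: no default, so it cannot be left out


def route(result: TaskResult) -> str:
    """Pick a queue name from the handoff field (queue names are made up)."""
    return {
        NextLayer.WEB: "browser-queue",
        NextLayer.MOBILE: "mobile-queue",
        NextLayer.HUMAN: "review-queue",
    }[result.next_layer]


result = TaskResult("Daily account status check", "stopped", NextLayer.MOBILE)
print(route(result))  # mobile-queue
```

Counting results per `NextLayer` value is then enough to show which layer carries the workload.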

Common Mistakes to Avoid

The first mistake is treating AI browser automation as a shortcut around process design. The agent still needs a task lane, browser state, output format, and stop rule. Without those pieces, every run becomes a one-off.

The second mistake is measuring only run speed. A task that completes in five minutes but takes thirty minutes to review is not efficient. Measure the full loop.

Avoid these failure modes:

- Running the agent across accounts without profile ownership

- Changing routes during a task without a rule

- Letting the agent continue after a new verification step

- Saving output without a timestamp or source

- Scaling before failure reasons are known

- Treating mobile app work as web work

- Hiding edge cases because they slow the demo

Google Search Central advises creators to focus on helpful, reliable content rather than content made only for search visits. The same standard works for operations. Automate because the result is clearer and easier to trust, not because automation sounds modern.

Security claims need care and evidence. Browser automation does not make accounts safe by default. It can reduce internal mistakes when the workflow is well designed.

Platform rules, account quality, network history, content behavior, and review practices still matter.

A good failure review is short and plain. Use a short record instead of a long postmortem. Record the run ID, account lane, page reached, stop reason, owner, next safe action, and whether the account should pause before another run.

Do not bury this in a chat thread. Put it where the next operator can read it before touching the same account.
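The failure record described above fits in one small structure, and the same structure can gate the next run on that account. The sketch reuses the run ID and lane from this article's pilot example. The page, next action, and the `may_run` helper are assumptions added for illustration.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class FailureRecord:
    run_id: str
    account_lane: str
    page_reached: str
    stop_reason: str
    owner: str
    next_safe_action: str
    pause_account: bool


def may_run(account_lane: str, records: list) -> bool:
    """Check the most recent record for a lane before starting another run.

    Assumes `records` is ordered oldest to newest.
    """
    for record in reversed(records):
        if record.account_lane == account_lane:
            return not record.pause_account
    return True  # no failure on file for this lane


records = [
    FailureRecord(
        run_id="dashboard-check-2026-05-07",
        account_lane="client group B",
        page_reached="/login",                       # illustrative value
        stop_reason="changed login screen",
        owner="Sam",
        next_safe_action="confirm login state manually",  # illustrative value
        pause_account=True,
    )
]
print(json.dumps(asdict(records[0]), indent=2))
print(may_run("client group B", records))  # False
print(may_run("client group A", records))  # True
```

Because the gate reads the record itself, the next operator does not need to find the chat thread to know the account is paused.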

Who It Fits and When It Is a Strong Match

AI browser automation is a strong match for teams that already have repeatable web work. The task does not need to be exciting. It needs to be frequent, structured, and worth reviewing.

Strong fit

- Operations teams checking account status across dashboards

- Agencies managing many client web tools

- Growth teams pulling reports from repeated sources

- Support teams reviewing queues with clear labels

- QA teams checking role-based web states

- Teams that need better handoff notes

Weak fit

- Tasks that change shape every day

- Work that depends on high-stakes human judgment

- Native mobile app workflows

- Account systems with no owner or policy

- Teams that cannot review failed runs

- Workflows where the normal path is still unknown

When mobile execution matters, the fit changes. A browser can update a dashboard or start a web-side task. It cannot replace app-side environment control. Teams that need real mobile environments should compare browser work with cloud phone and device isolation layers.

Network routing also affects fit. Before the team adds more accounts, confirm whether a browser task needs a route that belongs to a specific account lane, client group, or region policy. A proxy network should support the account policy, not act as a hidden variable.

The fit check should be repeated after the pilot. A task can start as a browser task and later reveal mobile, route, or account-state needs.

Treat that finding as a design signal, not a failure. It tells the team which execution layer should own the next version.

Pilot Rollout, Measurement, and Recovery Checks

A pilot should prove that AI browser automation reduces total team effort. Total effort includes setup, run time, review time, and recovery. Do not judge from a clean demo.

Track five measures:

- Task time: minutes from start to saved result

- Review time: minutes needed to verify output

- Stop count: how many tasks paused for a known reason

- Error reason: login, page change, route, account state, missing field, or unclear instruction

- Recovery time: minutes to return to a known state
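These five measures can be rolled up from per-run records with a few lines of code. The run data below is invented for illustration, and the field names are assumptions. Setup time is left out of the full-loop figure because it is usually a one-off cost rather than a per-run one.

```python
from collections import Counter
from statistics import mean

# Hypothetical run records; minutes and reasons are invented for illustration.
runs = [
    {"task_min": 4, "review_min": 1, "stop_reason": None, "recovery_min": 0},
    {"task_min": 5, "review_min": 2, "stop_reason": None, "recovery_min": 0},
    {"task_min": 3, "review_min": 6, "stop_reason": "login", "recovery_min": 10},
    {"task_min": 2, "review_min": 5, "stop_reason": "login", "recovery_min": 8},
    {"task_min": 4, "review_min": 4, "stop_reason": "page change", "recovery_min": 5},
]


def summarize(runs):
    """Roll per-run records up into the pilot measures."""
    stops = [r for r in runs if r["stop_reason"]]
    reasons = Counter(r["stop_reason"] for r in stops)
    return {
        "avg_task_min": mean(r["task_min"] for r in runs),
        "avg_review_min": mean(r["review_min"] for r in runs),
        "stop_count": len(stops),
        "top_stop_reason": reasons.most_common(1)[0][0] if stops else None,
        "avg_recovery_min": mean(r["recovery_min"] for r in stops) if stops else 0,
        # Full loop per run: task + review + recovery.
        "avg_full_loop_min": mean(
            r["task_min"] + r["review_min"] + r["recovery_min"] for r in runs
        ),
    }


for name, value in summarize(runs).items():
    print(name, value)
```

In this made-up sample the average task takes 3.6 minutes but the average full loop takes 11.8, which is the gap a speed-only measurement would hide.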

Use one workflow for the first pilot. Run it through a full cycle. If it is a daily task, run it for several days. If it is a weekly task, run at least one week.

Here is a simple pilot note:

- Run ID: dashboard-check-2026-05-07

- Account lane: client group B

- Normal results: 31

- Stopped results: 4

- Top stop reason: changed login screen

- Review owner: Sam

- Next fix: add a login-state check before data pull

Recovery is the real test. A team that can explain the failed state can improve the workflow. Reduce scope when no one knows what happened after a failed run or a broken handoff. Smaller pilots create better evidence.

Review the pilot in one meeting with operations and engineering together. Bring the run log, not opinions alone.

Operations can explain where handoff broke. Engineering can see whether the issue came from selectors, timing, page state, route setup, account state, or a bad stop rule. The result should be one next fix, not ten vague ideas.

Frequently Asked Questions

What is AI browser automation?

It is the use of AI-assisted browser agents and workflow rules to run repeatable web tasks. The team still defines the task, profile, output, review path, and recovery owner before a run starts.

Is it different from browser scripting?

Yes, and the split should stay clear. Browser scripts follow fixed steps. AI browser automation may add page reading, summaries, and exception handling across pages that do not always look the same. It still needs guardrails, output checks, and a human owner. Do not skip that owner.

What should teams automate first?

Start with frequent web tasks that are easy to verify. Account checks, report pulls, queue review, and structured data entry are good first pilots.

Does it replace operations staff?

No. It removes repeated browser work. People still own policy, review, client judgment, and exception handling.

When should a browser agent stop?

It should stop on new login prompts, unknown warnings, changed fields, missing pages, or high-impact actions. Stop rules protect the workflow.

Can it support multi-account work?

Yes, if each account lane has a profile, owner, route rule, and review process. Without those rules, automation can increase confusion.

When is mobile automation a better fit?

Mobile automation is better when work happens inside native apps, requires mobile state, or depends on device-level behavior. Browser automation can support the web side.

What should a pilot measure?

Measure task time, review time, stop count, error reason, and recovery time. Together, these measures show whether the workflow saves real effort.

Conclusion

AI browser automation is a new way to run online operations when teams use it as an execution layer, not a shortcut. It works best for repeatable web tasks with clear inputs, known browser state, fixed outputs, and visible stop rules.

The practical next step is narrow. Pick one repeated workflow. Define the profile, route, normal path, stop path, output, owner, and review window.

Run a small pilot and measure the full loop before scaling. If the work crosses into mobile apps or device-level identity, pair the browser layer with mobile execution infrastructure instead of forcing one tool to cover every environment.