Key Takeaways

- Cloud phone provider comparison should start with workflow fit, not only device count or monthly price

- Business teams need to compare isolation, automation, routing, handoff, support, and reporting together

- Cloud phone alternatives can look similar in demos but behave differently under team operations

- A small pilot should test recovery quality, not only whether tasks can run once

- The best short list is the one that makes ownership and failure review clear

Cloud phone provider comparison is the process of evaluating remote Android providers by how well they support real business workflows. It should cover device access, isolation, automation, routing, team handoff, support, and reporting.

A provider is not just a place to rent Android capacity. For business teams, the provider becomes part of the operating model for mobile work. It affects how tasks are assigned, how account lanes stay separated, how failures are reviewed, and how new workflows are added.

That matters most when a provider demo looks good but the team's daily handoff still depends on memory.

The right comparison starts with the work. A QA team may need device coverage and build testing. A growth team may need clean account lanes and repeatable app actions. An operations team may need queues, status records, and recovery notes.

Name the work first, then let provider features prove whether they support that work under daily team pressure.

Start with the workflow.

The Core Idea Behind Cloud Phone Provider Comparison

The common mistake is comparing cloud phone providers as if every team needs the same thing. Device quantity matters, but it is only one part of the decision. A provider with a large pool can still be a poor fit if the team cannot manage ownership, routing, automation, or review.

Fit beats size.

The comparison should answer one question first: can this provider support the way the team actually works?

That question changes the evaluation. Instead of asking whether a provider has Android devices, ask whether it can support task lanes. Instead of asking whether it has automation, ask whether automation can stop safely and leave useful records. Instead of asking whether setup is fast, ask whether a new operator can understand the environment after handoff.

Handoff proves fit.

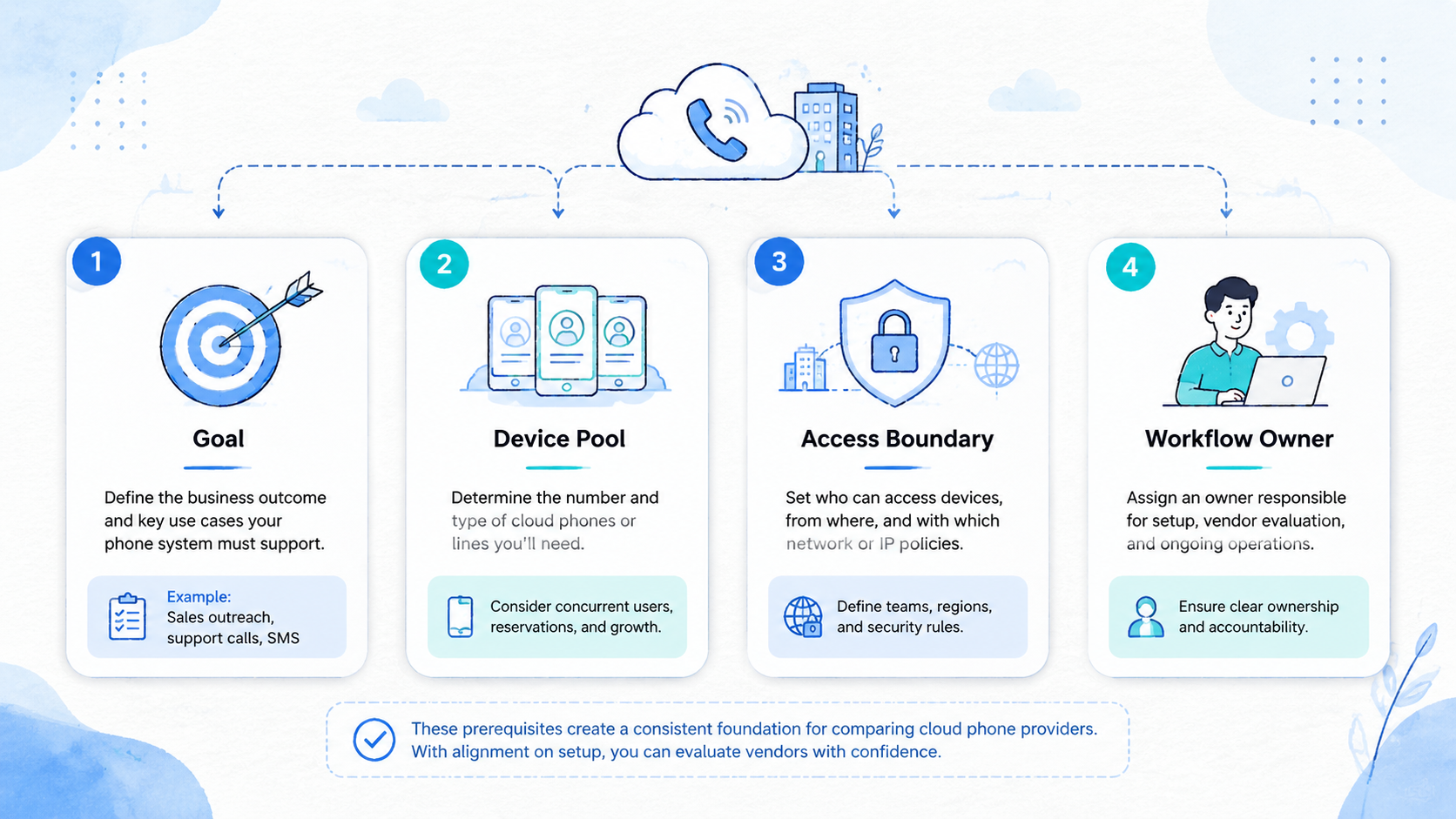

Business teams should compare at least six dimensions:

| Dimension | What to check | Why it matters |
|---|---|---|
| Device access | Remote Android availability and session control | Work needs enough capacity |
| Isolation | Separation between device, account, and task lanes | Mixed state creates confusion |
| Automation | Repeatable actions, triggers, and stop rules | Scripts need control and review |
| Routing | Network and region policy support | Routing should match workflow intent |
| Team handoff | Owners, notes, status, and task records | Operators need continuity |
| Support | Response process and troubleshooting path | Recovery needs a clear owner |

Google Search Central recommends helpful, reliable content that serves people first. The same discipline applies to provider selection. Compare what helps the team operate, not what sounds broad in marketing copy.

Why Teams Search for This Topic

The myth is that cloud phone alternatives differ mainly by price, device count, or surface features. Those details matter, but they rarely explain operational fit alone. A cheap device pool can become expensive if every exception requires manual detective work.

Cheap can still be slow.

Keep that in mind when a low-cost option shifts review work back to operators, managers, or private chats after each exception.

The reality is more specific. Teams search for comparison guidance when they already feel friction. Operators may share devices without clear ownership. Account states may drift.

Drift creates rework because each operator must first discover what changed before any useful task can start.

Automation may run through unknown states. Logs may live in one person's local folder.

Local notes do not scale.

Put the next action in the shared task record so another person can continue without replaying the whole day.

That friction turns provider choice into a workflow decision. A business team does not only need remote phones. It needs a way to run mobile tasks with enough consistency that another teammate can continue the work.

The record matters.

Consider a simple scenario. A team runs daily app checks across 12 cloud phones, 3 account lanes, and 2 operators. The provider must let the team assign devices, separate lanes, check status, and recover from app-state issues. If the provider only gives raw access, the team must build or manually enforce the rest.

Test that.

This is where cloud phone evaluation should connect to execution infrastructure. The provider should make the task easier to run, review, and repeat.

Who Benefits Most and In What Situations

The strongest fit is a team that runs recurring mobile workflows. One person testing a small app may not need a deep provider comparison. A business team with multiple operators, accounts, regions, or app workflows does.

Team shape changes the bar.

Three groups need a more careful comparison.

- Operations teams need assigned lanes, device status, task notes, and recovery records

- QA teams need repeatable environments, app install checks, logs, and controlled test runs

- Growth teams need account separation, routing discipline, and handoff clarity

Provider choice also matters when the team is comparing a ugphone alternative, vmos alternative, or vmos cloud alternative. Those searches can signal that the current setup is no longer enough. The better question is not "which tool is similar?" It is "which provider supports the next workflow without hiding risk?"

Similarity is not the goal.

For teams that operate device pools, a phone farm model may fit better than scattered single-device access. The value comes from managing capacity as a system. Device pools need labels, owners, status, and retirement rules.

Scale raises the stakes.

Labels keep work clear when the device pool grows beyond what one person can remember accurately.

The not-fit case is a team that has no written workflow. If nobody can describe the task, provider comparison will become guesswork. Write the process first.

Process comes before tools.

Cloud Phone Provider Comparison Criteria for Business Teams

Use a scorecard before watching demos. Demos are useful, but they can hide the hard parts. A scorecard forces the team to compare providers against the same workflow.

Score the same task.

Use the table below as the first pass before you ask for a product tour.

Use pass, concern, or fail for each line. Avoid vague scores at first. A provider either supports the first workflow cleanly, creates a concern, or fails the requirement.

Keep the labels plain.

Here is a simple evaluation view:

| Criterion | Pass | Concern | Fail |
|---|---|---|---|
| Workflow fit | Runs the named task cleanly | Needs manual side notes | Task cannot be completed |
| Isolation | Lanes are clear | Some shared state remains | Ownership is unclear |
| Automation | Stops on exceptions | Needs custom guardrails | Runs through unknown states |
| Handoff | Next operator can continue | Extra chat needed | Original operator must explain |
| Recovery | Failure reason is visible | Reason needs digging | Failure is hard to trace |

This scorecard makes the short list more honest. It also reduces the chance that a broad feature list beats a better operational fit.
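
As a rough sketch, the scorecard can be kept as data so that every provider is scored against the same five criteria. The names `Rating`, `CRITERIA`, and `shortlist_decision`, and the decision rule itself (any fail drops the provider, any concern triggers a follow-up), are assumptions made for illustration and are not part of any provider's tooling.

```python
from enum import Enum


class Rating(Enum):
    PASS = "pass"
    CONCERN = "concern"
    FAIL = "fail"


# The five criteria from the evaluation table above.
CRITERIA = ("workflow_fit", "isolation", "automation", "handoff", "recovery")


def shortlist_decision(scores: dict[str, Rating]) -> str:
    """Return a decision for one provider scored on the named task.

    Assumed rule: one FAIL drops the provider, one CONCERN means
    follow up before short-listing, otherwise short-list it.
    """
    missing = [name for name in CRITERIA if name not in scores]
    if missing:
        # Refuse to decide on a partly scored provider.
        raise ValueError(f"unscored criteria: {missing}")
    ratings = [scores[name] for name in CRITERIA]
    if Rating.FAIL in ratings:
        return "drop"
    if Rating.CONCERN in ratings:
        return "follow up"
    return "shortlist"
```

Raising on a missing criterion is a deliberate choice in this sketch: it keeps a provider from reaching the short list just because one hard question was never asked.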

Short lists need proof.

Add one evidence review before the demo ends. Ask the provider to show where a failed task appears, who can see it, and what record remains after the session closes. A clean answer does not need to be complex. It needs to show status, owner, failure reason, and next step.

Ask to see the record.

The Google Search Central SEO Starter Guide recommends making websites helpful for users and easy to understand for search engines. Provider selection follows a similar discipline. Make the operating record clear for humans first, then connect tools around that record.

How to Evaluate or Start Using a Provider

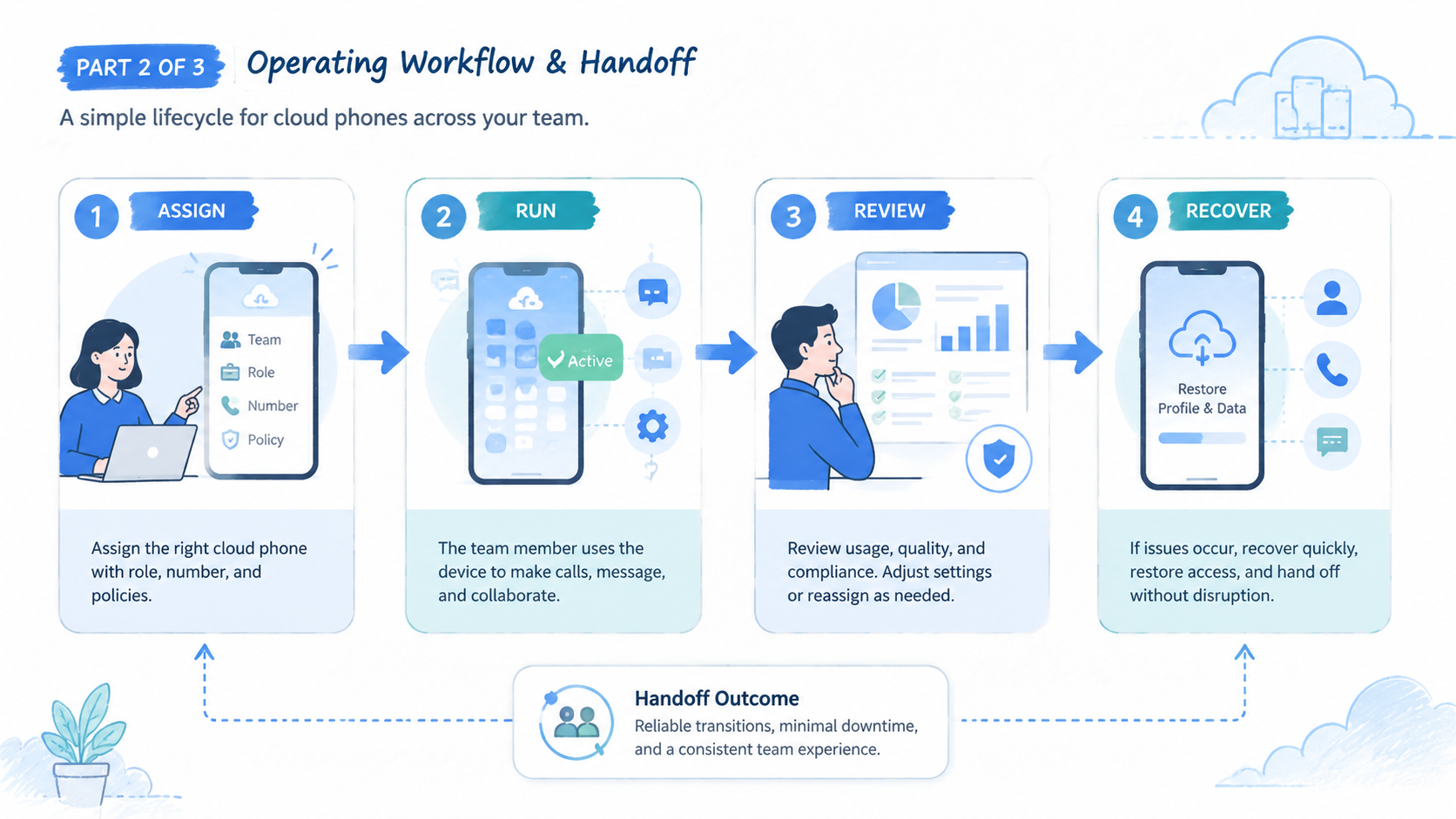

Do not start by migrating every mobile workflow. That creates too many variables. Begin with a pilot task that already has business value and limited risk.

Small tests reveal more.

Use this sequence:

- Pick one workflow: choose a task such as account dashboard review, app QA check, content verification, or daily readiness check

- Define the device unit: decide whether one device maps to one account, one region, one operator, one app, or one task lane

- Set required fields: device ID, owner, route group, account lane, last action, status, and next action

- Run the task manually first: confirm the process is clear before adding automation

- Test provider controls: check assignment, isolation, session stability, and status visibility

- Add limited automation: automate only the parts that have known inputs and clear stop rules

- Review failures: tag each failure as device, route, app state, account state, input, or operator issue
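
The required fields in the sequence above can be sketched as a small record type. This is a minimal illustration, assuming every field is stored as plain text; the `TaskRecord` and `missing_fields` names are hypothetical and do not come from any provider API.

```python
from dataclasses import dataclass, fields


@dataclass
class TaskRecord:
    """One shared task record with the required fields from the pilot sequence."""
    device_id: str
    owner: str
    route_group: str
    account_lane: str
    last_action: str
    status: str
    next_action: str


def missing_fields(record: TaskRecord) -> list[str]:
    """Return the names of fields that are empty or whitespace only."""
    return [f.name for f in fields(record) if not getattr(record, f.name).strip()]
```

A record with any missing field is a handoff risk, so a team might refuse to close a shift while `missing_fields` returns a non-empty list.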

Teams planning mobile automation should be especially strict here. Automation works best when the starting state is known. Unknown app screens, login prompts, and missing inputs should stop the workflow.
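
One way to express that stop rule is sketched below. It assumes each step declares the screen it expects before acting, and that some `read_state` function can report the current screen; `Step`, `StopRecord`, `run_workflow`, and `read_state` are hypothetical names, and a real provider will expose state differently.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Step:
    name: str
    expected_state: str              # screen the step assumes before it acts
    action: Callable[[dict], None]   # hypothetical automation call


@dataclass
class StopRecord:
    step: str
    observed_state: str
    reason: str


def run_workflow(
    steps: list[Step],
    read_state: Callable[[], str],
    inputs: dict,
    required_inputs: list[str],
) -> tuple[list[str], Optional[StopRecord]]:
    """Run steps in order and stop, without retrying, on any unknown condition."""
    missing = [key for key in required_inputs if not inputs.get(key)]
    if missing:
        reason = "missing input: " + ", ".join(missing)
        return [], StopRecord("(before start)", "not checked", reason)

    completed: list[str] = []
    for step in steps:
        observed = read_state()
        if observed != step.expected_state:
            # Unknown screen or login prompt: leave a record instead of guessing.
            return completed, StopRecord(step.name, observed, "unexpected state")
        step.action(inputs)
        completed.append(step.name)
    return completed, None
```

The function returns both the completed steps and a stop record, so the next operator can see where the run ended and why.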

Provider evaluation should include support and recovery. A provider may look strong during setup but weak during troubleshooting. Ask how failures are surfaced, how device status is checked, and how the team can preserve evidence for review.

Recovery is part of fit.

Use one rule: the pilot is not successful until a second operator can recover a failed task from the record.

One person is not enough.

Also test a normal handoff, not only a failure. Ask one operator to start the task, record the state, and stop before the final action. Then ask another operator to continue from the record. If the second operator needs a private explanation, the provider setup is not ready for wider use.

Private context is a warning.

That handoff test often exposes missing fields. Common gaps include route labels, device owner, account lane, last successful action, and stop reason. Fix those fields before comparing more providers.

Fix the fields first.

Add them to the task template, not to a private checklist that only one operator remembers during a busy shift.

A concrete pilot can stay small: 12 devices, 3 lanes, 2 operators, 1 queue owner, and a 7-day review window. Record 5 required fields for every task: owner, device ID, lane, last action, and stop reason. That is enough to expose most handoff gaps.
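
For the pilot shape above, lane assignment can be sketched in a few lines. The example assumes the device unit is "one device belongs to exactly one lane" and uses a simple round-robin split; the device and lane names are placeholders, and a team may well choose a different mapping.

```python
from collections import Counter


def assign_lanes(device_ids: list[str], lanes: list[str]) -> dict[str, str]:
    """Map each device to exactly one lane, round-robin."""
    if not lanes:
        raise ValueError("at least one lane is required")
    if len(set(device_ids)) != len(device_ids):
        # A duplicated ID would silently place one device in two lanes.
        raise ValueError("duplicate device ID")
    return {device: lanes[i % len(lanes)] for i, device in enumerate(device_ids)}


# Placeholder names for the 12-device, 3-lane pilot.
devices = [f"device-{n:02d}" for n in range(1, 13)]
lanes = ["lane-a", "lane-b", "lane-c"]

assignment = assign_lanes(devices, lanes)
lane_sizes = Counter(assignment.values())  # 4 devices in each lane
```

Keeping the assignment as a single mapping gives the queue owner one place to check which lane a device belongs to before a task starts.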

Keep the pilot tight.

Cloud Phone Provider Comparison Fit Boundaries and Mistakes

Provider comparison becomes weaker when teams compare brands before comparing workflow boundaries. A provider can be strong for one team and wrong for another. The deciding factor is the work pattern.

The pattern decides.

Good fit signals include:

- The workflow repeats on a schedule

- Device ownership can be named

- Account or task lanes need separation

- Operators need clean handoff

- Automation has narrow, known steps

- Failure review matters to the business

Warning signs deserve equal attention:

- Devices are shared without notes

- Routes change without a record

- Operators fix failures privately

- Automation retries unknown states

- The provider demo does not show recovery

- The team cannot name the first pilot workflow

For account-heavy workflows, multi-account management should be part of the evaluation. Device access alone does not solve account ownership. The provider model should support clear boundaries and review.

Device separation also needs explicit review. Device isolation is relevant when teams need clean environments for account lanes, app testing, or operator handoff. Do not treat isolation as an afterthought.

The mistake to avoid is buying capacity before defining control. Capacity without control creates more places for state to drift.

Control comes first.

Without that order, a bigger device pool only gives the team more places to lose context.

One extra fit boundary is support depth. A provider may expose device access but give little help when the team needs to trace a failed workflow. Ask who owns troubleshooting across device state, route state, automation state, and account lane notes.

Support must be traceable.

The official Android Developers site is a useful reminder that mobile tooling has separate layers: platform APIs, app behavior, device state, and developer tools each have their own job. A cloud phone provider should make those layers easier to operate, not blur them into one unclear dashboard.

Pilot Measurement and Recovery Review

A pilot should measure whether the provider improves reliability. It should not only measure whether the provider can run a task once.

One clean run is not proof.

Track six measures during the pilot:

- Task completion rate

- Number of unclear stops

- Recovery time

- Handoff success

- Manual side notes required

- Provider support issues

Use a recovery table for every failed run:

| Failure label | Example | Recovery owner |
|---|---|---|
| DEVICE | Session unavailable or unstable | Platform owner |
| ROUTE | Route mismatch or unclear policy | Network owner |
| APP | Unexpected app state | Workflow reviewer |
| ACCOUNT | Account login or warning state changed | Account owner |
| INPUT | Missing task data | Queue owner |
| OPERATOR | Step missed or entered incorrectly | Team lead |

This table keeps the review grounded. It also shows whether a provider problem is actually a process problem. That distinction matters before signing a larger contract or moving more workflows.
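
The recovery table can also live as a lookup, so that every failed run is routed to a named owner. The sketch below copies the labels and owners from the table; the function names are hypothetical, and rejecting unknown labels is an assumption about how strict a team wants to be.

```python
from collections import Counter

# Failure label -> recovery owner, copied from the recovery table.
RECOVERY_OWNER = {
    "DEVICE": "Platform owner",
    "ROUTE": "Network owner",
    "APP": "Workflow reviewer",
    "ACCOUNT": "Account owner",
    "INPUT": "Queue owner",
    "OPERATOR": "Team lead",
}


def recovery_owner(label: str) -> str:
    """Return the owner for a failure label, rejecting unlabeled failures."""
    key = label.strip().upper()
    if key not in RECOVERY_OWNER:
        raise ValueError(f"unknown failure label: {label!r}")
    return RECOVERY_OWNER[key]


def failures_by_owner(labels: list[str]) -> Counter:
    """Count failed runs per recovery owner for the weekly review."""
    return Counter(recovery_owner(label) for label in labels)
```

If most failures land on the queue owner or team lead rather than the platform owner, that is a hint that the problem is process, not provider.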

Sort the cause first.

The final pilot question is practical: can the team explain every stopped task without asking the original operator? If yes, the provider may support scaled work. If no, improve records, ownership, or stop rules before expanding.

Explain stops in writing.

The stopped task should show what happened, who owns the next check, and which field needs to change before retry.

Run the same review after one week. A provider may pass the first demo and still fail during repeated team use. Weekly review shows whether notes stay current, whether operators follow the same stop rules, and whether recovery time improves.

Repeat use is the test.

Keep the pilot small until the review is boring. Boring means failures are labeled, owners are clear, and the next action is visible.

Set the pass bar early.

Set a local pass bar before the pilot starts. One example is 90% task completion, zero unclear stops, and recovery notes for every failed run. The exact number can change by workflow, but the bar should be written before the test.
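
The example bar can be checked mechanically once runs are recorded. This is a minimal sketch, assuming a failed run with no stop reason counts as an unclear stop; the `Run` type and `meets_pass_bar` function are hypothetical, and the 90% default is only the example figure from the text.

```python
from dataclasses import dataclass


@dataclass
class Run:
    completed: bool
    stop_reason: str = ""     # empty on a failed run is treated as an unclear stop
    recovery_note: str = ""   # expected for every failed run


def meets_pass_bar(runs: list[Run], min_completion: float = 0.90) -> bool:
    """Check completion rate, unclear stops, and recovery notes together."""
    if not runs:
        return False  # no evidence is not a pass
    failed = [run for run in runs if not run.completed]
    completion = (len(runs) - len(failed)) / len(runs)
    unclear_stops = sum(1 for run in failed if not run.stop_reason.strip())
    missing_notes = sum(1 for run in failed if not run.recovery_note.strip())
    return completion >= min_completion and unclear_stops == 0 and missing_notes == 0
```

Writing the threshold as a parameter keeps the bar adjustable per workflow while still forcing the team to pick a number before the test.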

Write the bar down.

Do it before the demo.

Frequently Asked Questions

What is cloud phone provider comparison?

It is the process of comparing cloud phone providers by workflow fit, isolation, automation control, routing, handoff, support, and recovery quality.

Are cloud phone alternatives mostly the same?

No. Providers may look similar at the access layer, but business fit depends on workflow control, records, isolation, and support.

When should a team look for a ugphone alternative?

Look when the current setup cannot support the team's workflow, handoff, automation needs, or recovery process. Do not switch only because another tool has a longer feature list.

Use the workflow gap as the filter.

Compare the current task failure, the missing field, and the next operator handoff before naming any replacement.

When should a team look for a vmos alternative?

Look when the team needs a different operating model for remote Android work. Focus on the workflow gap, not only the brand comparison.

Brand names should come after the task model, not before it.

That order keeps the comparison tied to work instead of vendor labels, feature pages, or old habits.

What should business teams compare first?

Compare workflow fit first. Device access, automation, routing, and support should be judged against one named task.

One task keeps the comparison fair.

Is price the most important factor?

Price matters, but it should not be isolated from labor, failure recovery, and operational overhead. A cheaper setup can cost more if handoff fails.

Count the review time too.

How many providers should be tested?

Test a short list. Two or three serious options are easier to evaluate than a broad list with shallow notes.

What makes a pilot successful?

A successful pilot produces clear task results, understandable failures, recoverable handoff, and a decision the team can explain.

Conclusion

A practical ranking should put operational fit first. Begin with the workflow, then compare isolation, automation control, routing policy, handoff, evidence capture, and support. Device count and price still matter, but they should not lead the decision alone.

Lead with fit.

Use a practical priority order: first define the task, then define the device unit, then test the provider, then review failures. A provider that makes recovery clearer is more valuable than one that only looks fast in a clean demo.

Clear recovery wins.

The next step is a small pilot. Pick one workflow, write the fields that must be visible, test two or three providers, and record every stopped task. If a second operator can continue the work from the record, the provider is ready for a wider evaluation.